AI Agents for CRE Lending Compliance: Controls That Hold

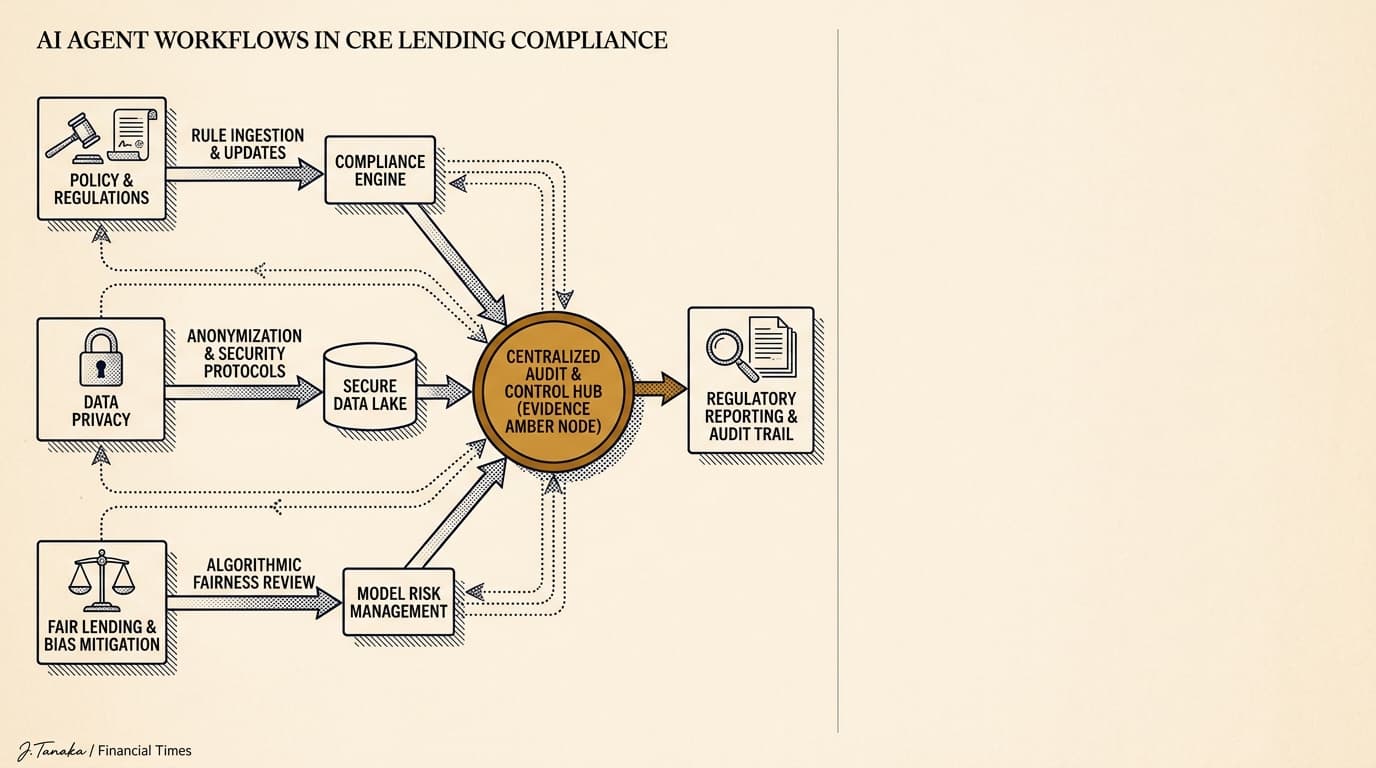

AI agents in credit workflows need to operate inside documented control boundaries. For private CRE lenders, that means tying each agent action to lending policy, privacy requirements, fair lending rules, recordkeeping obligations, and human review standards before rollout.

The Consumer Financial Protection Bureau has made one point clear: a creditor that uses complex technology still owns the compliance risk. Predictive models, automation, and advanced analytics do not change the duty to follow federal consumer financial law. In private commercial real estate lending, the legal details shift by product, state, borrower type, and lender structure, but the operational rule does not. An AI agent does not take responsibility off the lender's desk. Policy compliance, privacy controls, fair lending review, and auditability still sit with the institution.

This article lays out how to govern AI agents for CRE lending compliance before they touch borrower intake, origination, underwriting support, servicing, or payoff workflows. The focus is practical: approval limits, data handling, explainability, testing, escalation, and evidence retention. These are the controls compliance and operations teams actually need in 2026.

Key Takeaways

- AI agents in lending should be treated as controlled workflow actors, with defined permissions, escalation rules, and retained logs for every material action.

- Privacy controls should separate public property data, borrower-provided information, and nonpublic personal information, with role-based access and vendor restrictions documented before launch.

- Fair lending review should start with data inputs, proxy effects, exception handling, and adverse action support, not just model accuracy.

- Audit readiness comes down to retained evidence: prompts, source documents, decision rules, overrides, timestamps, user approvals, and version history.

- The safest early deployments are bounded tasks like document routing, covenant ticklers, and payoff statement assembly support, not open-ended credit decisions.

AI agents for CRE lending compliance: what the control framework must do

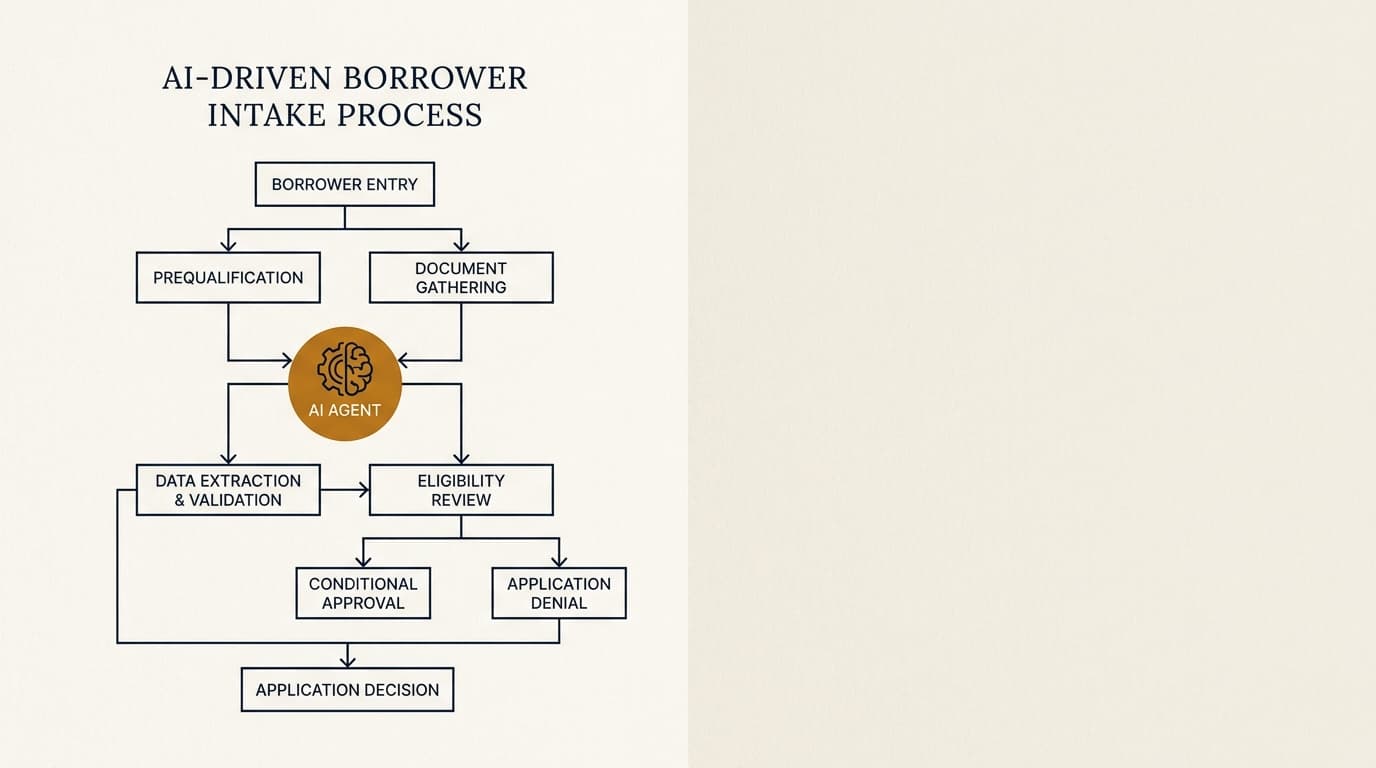

An AI compliance framework for CRE lending should say four things clearly: what the agent can access, what it can produce, what it cannot decide, and when a human has to review or approve the result. If a lender cannot answer those questions in writing, it is not ready to put an agent inside a credit workflow.

For most private CRE lenders, the goal is narrower than abstract “AI governance.” The real goal is to let an agent handle specific work inside existing policy limits: collect documents, classify files, compare borrower submissions against checklists, prepare payoff inputs, draft exception memos, or spot missing data for human review. If the broader operating model is still under review, the overview page on AI agents for private commercial real estate lending covers use cases, workflow design, ROI, and implementation risk at the portfolio level.

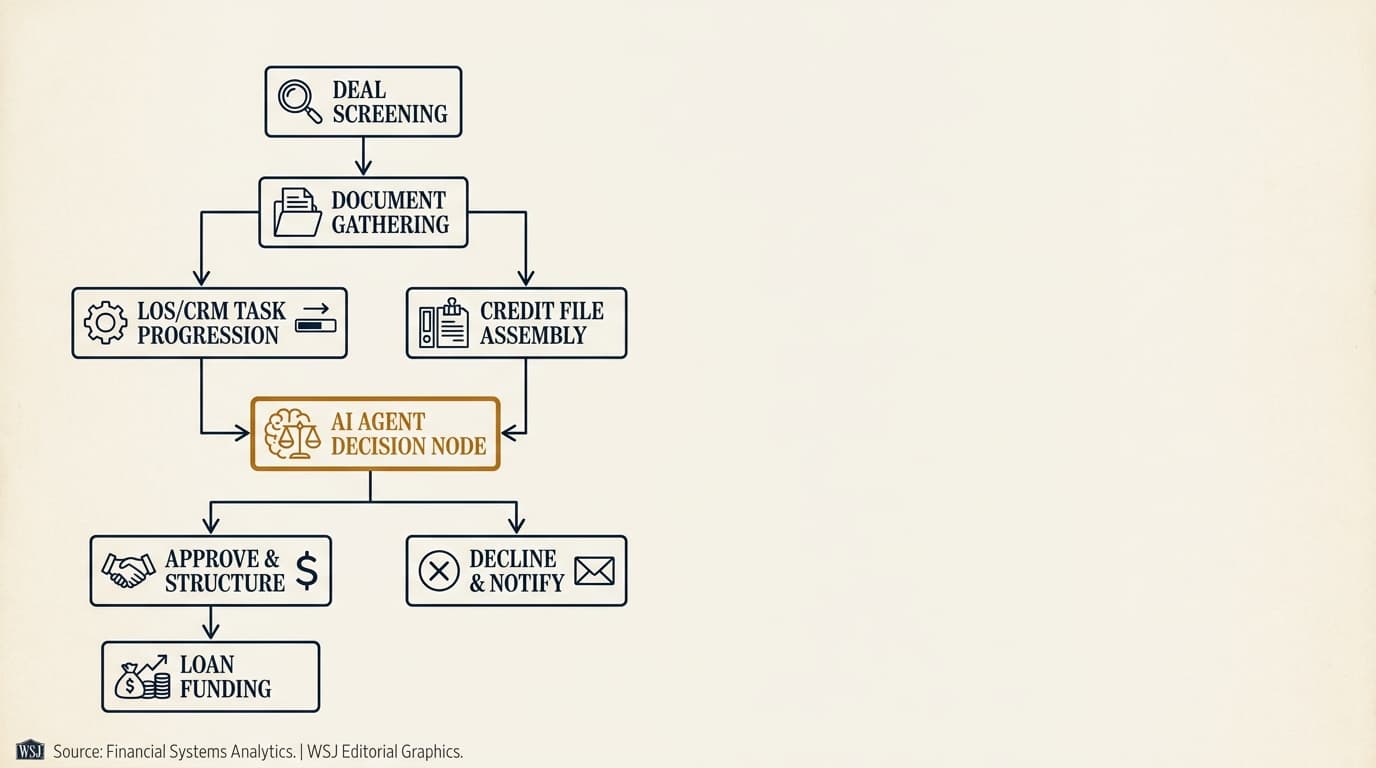

A workable control framework usually has five layers:

- Use-case approval: define the exact task, business owner, compliance owner, system dependencies, and prohibited actions.

- Data governance: map every data element the agent may ingest, generate, store, and transmit.

- Decision governance: separate assistive outputs from binding lending decisions, pricing, and exception approvals.

- Monitoring and testing: measure accuracy, error rates, override rates, drift, and policy exceptions.

- Audit evidence: retain logs and versioned documentation that can stand up to internal audit, exam requests, and litigation holds.

That framework matters because AI agents do more than generate text. They can retrieve information, call systems, trigger tasks, send communications, and create records. Each action creates its own control problem.
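One way to make the four framework questions concrete (what the agent can access, what it can produce, what it cannot decide, and when a human must review) is to record each approved use case as a small permission object and check every requested action against it. The sketch below is illustrative only: `AgentUseCase`, `authorize`, and the action and data-class names are hypothetical and not taken from any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentUseCase:
    """Hypothetical record of one approved agent use case."""
    name: str
    business_owner: str
    compliance_owner: str
    allowed_data: frozenset        # data classes the agent may read
    allowed_actions: frozenset     # outputs the agent may produce
    prohibited_actions: frozenset  # decisions the agent may never make
    review_required: frozenset     # actions that need human approval first


def authorize(use_case: AgentUseCase, action: str, data_class: str) -> str:
    """Return 'deny', 'needs_review', or 'allow' for a requested action."""
    if action in use_case.prohibited_actions:
        return "deny"
    if action not in use_case.allowed_actions:
        return "deny"  # default-deny anything not explicitly approved
    if data_class not in use_case.allowed_data:
        return "deny"
    if action in use_case.review_required:
        return "needs_review"
    return "allow"


# Example use case; every value here is an assumption for illustration.
intake_routing = AgentUseCase(
    name="borrower-intake-routing",
    business_owner="originations",
    compliance_owner="compliance",
    allowed_data=frozenset({"public", "confidential_business"}),
    allowed_actions=frozenset({"route_file", "draft_follow_up"}),
    prohibited_actions=frozenset({"decline_application", "change_pricing"}),
    review_required=frozenset({"draft_follow_up"}),
)

print(authorize(intake_routing, "route_file", "public"))           # allow
print(authorize(intake_routing, "draft_follow_up", "public"))      # needs_review
print(authorize(intake_routing, "decline_application", "public"))  # deny
```

The default-deny branch is the important design choice: an action that nobody thought to list is refused rather than silently permitted.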

Why AI governance in lending is different from ordinary workflow automation

Lending workflows combine judgment, regulated data, written credit policy, and long retention periods. That makes AI agent governance meaningfully different from ordinary back-office automation.

The CFPB’s circular on adverse action notification requirements makes clear that creditors cannot dodge legal duties by relying on algorithms that are hard to explain. The Federal Reserve’s guidance on model risk management says governance, validation, and controls should match the model’s use and materiality. The FTC’s guidance on artificial intelligence and algorithms tells firms to test for discriminatory outcomes and back up claims about system performance.

For CRE lenders, three differences matter most:

- Credit decisions create legal records. Recommendations, approvals, declines, stipulations, exception memos, and payoff figures may all be reviewed later.

- Input data is messy and sensitive. One workflow can pull in leases, rent rolls, guarantor financials, entity documents, emails, and third-party reports.

- Human judgment is still doing the real work. Even when a product sits outside parts of the consumer regime, policy exceptions, sponsor strength, collateral condition, and deal structure still require documented human review.

That is why the right comparison is not generic robotic process automation. It is controlled decision support inside a documented lending process. Teams planning workflow-level deployment should also review the separate guidance on CRE loan origination workflow AI agent design, because the control points need to sit at each handoff, not just at final approval.

Lending policy controls for AI agents in CRE credit workflows

AI agents should map directly to written lending policy, credit administration standards, and delegated authority limits. If a workflow step does not exist in policy, the agent should not be doing it unless the procedure has been updated and documented.

The first control question is simple: is the agent advisory or determinative? Advisory agents summarize documents, apply checklists, or flag deviations. Determinative agents approve, decline, price, waive, or trigger borrower-facing outcomes without human signoff. Most private CRE lenders should keep agents in the advisory bucket for material credit decisions, even if the technology can automate more. Just because the system can do it does not mean it belongs in production.

Policy mapping by workflow stage

Each workflow stage has its own compliance profile, and the policy map should reflect that. A single all-purpose “AI policy” is usually too vague to help.

| Workflow stage | Permitted AI agent activity | Higher-risk activity | Required control |
|---|---|---|---|
| Borrower intake | Checklist matching, missing-item follow-up drafts, file routing | Eligibility determinations without human confirmation | Manual approval before any decline or disqualification |
| Origination | Term sheet comparison, exception flagging, covenant checklist prep | Pricing changes or structure changes triggered automatically | Delegated authority check and approver log |
| Underwriting support | Data extraction, ratio calculation support, inconsistency flags | Autonomous risk grade assignment | Analyst review and override capture |
| Servicing | Payment exception routing, document ticklers, payoff package assembly | Borrower notices with legal effect sent automatically | Template approval and release controls |
| Portfolio monitoring | Covenant breach alerts, maturity reminders, watchlist summaries | Default classification without review | Second-line escalation rules |

In practice, the policy document should name the agent-enabled tasks, define prohibited actions, identify the system of record, and set approval thresholds. A lender already building front-end automation should align that map with its design for AI agents for CRE loan origination so the compliance boundary holds from intake through closing.

Delegated authority and exception controls

Delegated authority schedules should apply to AI-assisted outputs the same way they apply to manual work. If a credit officer needs approval to waive a DSCR minimum or amend reserve requirements manually, an agent should not be able to recommend or implement that action outside the same threshold structure.

Two controls matter most:

- Exception tagging: any output that departs from policy should be labeled as an exception and routed to the authorized approver.

- No silent overrides: if a human changes an agent recommendation, the system should retain both the original output and the final approved action, along with a reason code.

Those controls protect auditability. They also make monitoring much more useful, because they show where the agent consistently overflags or underflags issues.
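The two controls above can be sketched as a record that always keeps the agent's original output next to the approved action, plus a simple exception tag. This is a minimal illustration, not a reference implementation: `ReviewedOutput`, `record_review`, `tag_exception`, and the 1.25 DSCR minimum used in the example are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ReviewedOutput:
    """Keeps the agent's original output alongside the approved action."""
    loan_id: str
    agent_output: str
    final_action: str
    approver_id: str
    reason_code: Optional[str]
    recorded_at: str


def record_review(loan_id: str, agent_output: str, final_action: str,
                  approver_id: str, reason_code: Optional[str] = None) -> ReviewedOutput:
    """Build a review record; refuse overrides that lack a reason code."""
    overridden = agent_output != final_action
    if overridden and not reason_code:
        raise ValueError("override requires a reason code")
    return ReviewedOutput(
        loan_id=loan_id,
        agent_output=agent_output,
        final_action=final_action,
        approver_id=approver_id,
        reason_code=reason_code,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def tag_exception(dscr: float, policy_min_dscr: float) -> str:
    """Label a DSCR shortfall as an exception that must go to an approver."""
    if dscr < policy_min_dscr:
        return "exception:route_to_authorized_approver"
    return "within_policy"


# Assumed policy minimum of 1.25 for illustration only.
print(tag_exception(dscr=1.10, policy_min_dscr=1.25))
review = record_review("LN-001", "flag_dscr_exception", "waive_dscr_minimum",
                       approver_id="credit_officer_7", reason_code="SPONSOR_LIQUIDITY")
print(review.agent_output, "->", review.final_action)
```

Because the record is frozen and stores both values, a later reviewer can compare what the agent proposed with what was approved, which is also the raw data for overflag and underflag monitoring.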

Privacy and data governance requirements for AI agents

Privacy compliance for AI agents starts with data inventory, not model selection. A lender needs to know exactly which borrower, guarantor, tenant, and property data enters the workflow, where it is processed, whether it is retained, and which vendor or subprocessor can access it.

The FTC Safeguards Rule requires covered financial institutions to maintain an information security program with administrative, technical, and physical safeguards. Regulation B and Regulation Z, where applicable, require records to be kept in a way that supports notices and compliance review. The NIST Privacy Framework says organizations should define data-processing purposes, govern data actions, and manage privacy risk across the data lifecycle.

What data should be restricted

Not every AI agent should see every piece of CRE lending data. The safest setup applies least-privilege access by task.

- Public or low-sensitivity data: property address, parcel identifiers, public record filings, market rent comps, zoning references.

- Confidential business data: rent rolls, operating statements, borrower entity structure, lease abstracts, sponsor financial statements.

- Nonpublic personal information: guarantor tax returns, bank statements, Social Security numbers, dates of birth, personal contact details.

- Potentially sensitive characteristics or proxies: demographic indicators, protected-class proxies, and free-text notes that could create fair lending issues.

An intake-routing agent may need document names and completeness signals, but not full tax return content. A payoff statement assembly agent may need loan balance history and fee data, but not broad access to unrelated borrower files. Where agents are used to read source documents, lenders should separately control extraction and review, as described in the related page on AI agents for CRE document analysis.
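Least-privilege access by task can be approximated with a per-task field allowlist applied before any record reaches the agent. The following is a sketch under assumptions: the task names, field names, and `scope_record` helper are hypothetical, and a real deployment would enforce the same rule in the data layer rather than only in application code.

```python
# Hypothetical per-task allowlists, mirroring the two examples in the text.
TASK_ALLOWED_FIELDS = {
    "intake_routing": {"document_names", "completeness_flags"},
    "payoff_assembly": {"loan_balance_history", "fee_ledger"},
}


def scope_record(task: str, record: dict) -> dict:
    """Return only the fields the named task is allowed to see.

    Unknown tasks get an empty allowlist, so they receive nothing.
    """
    allowed = TASK_ALLOWED_FIELDS.get(task, set())
    return {key: value for key, value in record.items() if key in allowed}


loan_file = {
    "document_names": ["rent_roll.pdf", "guarantor_1040.pdf"],
    "completeness_flags": {"rent_roll": True, "t12": False},
    "guarantor_tax_return": "<full return contents>",
    "loan_balance_history": [1_000_000, 990_000],
    "fee_ledger": [{"type": "extension", "amount": 5_000}],
}

print(sorted(scope_record("intake_routing", loan_file)))
# ['completeness_flags', 'document_names']
print(sorted(scope_record("payoff_assembly", loan_file)))
# ['fee_ledger', 'loan_balance_history']
```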

Vendor and retention controls

Third-party AI deployment creates vendor-management issues that go beyond a standard SaaS review. The lender should document whether customer data is used for model training, whether prompts are retained, whether subprocessors are involved, which geographic regions host the data, and how deletion requests are handled.

A practical vendor checklist should include:

- Confirm whether the provider contract bars training on customer content by default or by election.

- Document subprocessor lists and change-notification procedures.

- Set retention periods for prompts, outputs, uploaded files, and application logs.

- Require role-based access control, audit logging, encryption in transit and at rest, and incident-notification obligations.

- Test redaction and masking for identifiers before production deployment.
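The last checklist item, testing redaction before production, can start with something as small as the check below. It is a deliberately narrow sketch: the pattern only catches Social Security numbers written in the hyphenated `NNN-NN-NNNN` form, and the function names are hypothetical. A real redaction test would also need to cover unformatted digits, dates of birth, account numbers, and scanned documents.

```python
import re

# Matches only the hyphenated SSN layout; other layouts are NOT covered.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mask_ssn(text: str) -> str:
    """Replace hyphenated SSNs with a fixed mask."""
    return SSN_PATTERN.sub("***-**-****", text)


def contains_ssn(text: str) -> bool:
    """Report whether any hyphenated SSN survives in the text."""
    return bool(SSN_PATTERN.search(text))


sample = "Guarantor SSN 123-45-6789 per the 2023 return."
masked = mask_ssn(sample)
print(masked)  # Guarantor SSN ***-**-**** per the 2023 return.

# A pre-production test would assert that nothing sent to the vendor leaks.
assert not contains_ssn(masked)
```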

For lenders operating across states or offering consumer-adjacent products, privacy review may also need to account for state-law requirements and borrower notice language. That review should happen before any live data touches the system.

Fair lending expectations: where compliance risk appears first

Fair lending risk in AI systems usually shows up in data selection, proxy variables, and exception handling before it shows up in top-line model accuracy. A system can look statistically accurate overall and still create serious compliance problems if it relies on variables or patterns that produce disparate outcomes or weak explanations.

The DOJ’s summary of the Equal Credit Opportunity Act states that creditors may not discriminate in any aspect of a credit transaction on prohibited bases covered by the statute. The CFPB’s fair lending resources point institutions to discrimination risks in underwriting, pricing, redlining, and servicing. The FFIEC’s fair lending examination procedures tell examiners to review policies, pricing, exceptions, and comparative treatment patterns.

Where AI agents can create fair lending risk

The highest-risk points are often not the obvious ones. In practice, compliance teams should test at least these scenarios:

- Lead or intake triage: an agent prioritizes files based on language patterns, geography, or sponsor descriptors that may correlate with protected classes.

- Document deficiency follow-up: borrowers get different levels of help or different response times based on relationship history or communication style.

- Exception memo drafting: the system frames similarly situated borrowers differently, which can affect committee treatment.

- Pricing support: the system recommends spreads or fees using historical approvals that may carry forward earlier discretionary bias.

- Servicing escalation: payment issues or covenant breaches are escalated unevenly across segments.

That is one reason many lenders keep AI out of direct decisioning and use it instead for bounded support in AI agents for CRE underwriting or servicing review, where a human still evaluates the flagged issue against documented policy.

Adverse action and explanation quality

If a workflow can contribute to a decline or materially less favorable terms, the lender should test whether the system can support a specific, accurate explanation of the principal reasons. Vague explanations like “algorithmic risk factors” are not usable compliance records.

A sound control design requires the agent to map outputs back to observable facts and approved policy rules, for example debt service coverage below the documented threshold, unsupported liquidity, missing guarantor financials, or tenant concentration above policy limits. If the system cannot tie its output to documented reasons, it should not be driving that stage of the workflow.
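Mapping outputs back to observable facts and policy rules can be sketched as a function that returns specific reasons, each citing the measured value and the policy limit. Debt service coverage is computed here as net operating income divided by annual debt service. The thresholds, field names, and `principal_reasons` helper are assumptions for illustration; actual limits come from the lender's written credit policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FileFacts:
    noi: float                      # net operating income, annual
    annual_debt_service: float
    largest_tenant_share: float     # fraction of rent from the largest tenant
    guarantor_financials_received: bool


# Hypothetical policy limits, NOT taken from any real credit policy.
POLICY = {"min_dscr": 1.25, "max_tenant_concentration": 0.40}


def principal_reasons(facts: FileFacts, policy: dict = POLICY) -> list:
    """Return specific, documented reasons; an empty list means none found."""
    if facts.annual_debt_service <= 0:
        raise ValueError("annual debt service must be positive")
    reasons = []
    dscr = facts.noi / facts.annual_debt_service
    if dscr < policy["min_dscr"]:
        reasons.append(
            f"DSCR {dscr:.2f} below policy minimum {policy['min_dscr']:.2f}"
        )
    if facts.largest_tenant_share > policy["max_tenant_concentration"]:
        reasons.append(
            f"Tenant concentration {facts.largest_tenant_share:.0%} above "
            f"policy limit {policy['max_tenant_concentration']:.0%}"
        )
    if not facts.guarantor_financials_received:
        reasons.append("Guarantor financial statements not received")
    return reasons


facts = FileFacts(noi=1_100_000, annual_debt_service=1_000_000,
                  largest_tenant_share=0.55, guarantor_financials_received=False)
for reason in principal_reasons(facts):
    print(reason)
```

If the function returns an empty list for a file the agent still wants to flag negatively, that is the signal described above: the output has no documented reason behind it and should go to a human rather than drive the workflow.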

Audit trails, model documentation, and evidence retention

An AI agent is only auditable if a reviewer can reconstruct what data it used, what instructions governed it, what output it produced, who approved the result, and what changed afterward. Keeping only the final memo or communication is not enough.

Federal Reserve SR 11-7 says effective challenge, ongoing monitoring, and outcomes analysis require documentation that matches the model’s complexity and use. The NIST AI Risk Management Framework calls for documentation of system purpose, limitations, performance, and risk treatments.

A lender should retain, at minimum:

- Input documents and source-system references used by the agent

- Prompt templates, business rules, and model or workflow version numbers

- Output artifacts, including intermediate summaries if they affect the outcome

- Human approvals, overrides, and reason codes

- Timestamps, user IDs, and system action logs

- Testing results, validation reports, and change-management records

For audit teams, the test is simple: can the institution replay a specific file from intake through approval, decline, servicing action, or payoff statement support and explain every AI-generated step? If not, the control environment is incomplete.
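The replay test can be partly automated by checking that every logged agent step carries the evidence listed above. The sketch below assumes each step is stored as a dictionary; the key names, `missing_evidence`, and `file_is_replayable` are hypothetical. It only checks for presence, and does not verify that the retained content is accurate.

```python
# Evidence keys assumed for illustration, based on the retention list above.
REQUIRED_EVIDENCE = {
    "input_documents",
    "prompt_template_version",
    "model_version",
    "output_artifact",
    "human_approval",
    "timestamp",
    "user_id",
}


def missing_evidence(event: dict) -> set:
    """Return the required evidence keys that are absent or empty."""
    return {key for key in REQUIRED_EVIDENCE if not event.get(key)}


def file_is_replayable(events: list) -> bool:
    """A file is replayable only if it has steps and none lacks evidence."""
    return bool(events) and all(not missing_evidence(e) for e in events)


complete_step = {
    "input_documents": ["rent_roll.pdf"],
    "prompt_template_version": "intake-v3",
    "model_version": "2026-01",
    "output_artifact": "checklist_summary.json",
    "human_approval": "analyst_12",
    "timestamp": "2026-02-01T15:04:05Z",
    "user_id": "svc-intake-agent",
}
incomplete_step = {**complete_step, "human_approval": None}

print(file_is_replayable([complete_step]))                   # True
print(file_is_replayable([complete_step, incomplete_step]))  # False
print(missing_evidence(incomplete_step))                     # {'human_approval'}
```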

A practical governance framework for approving AI agents

AI agent approval should follow a documented launch process with signoff from compliance, operations, technology, and the business owner. Informal pilots are where some of the biggest evidence gaps start, because teams skip data mapping, use-case scoping, and retention decisions.

The following workflow is workable for most private CRE lenders:

- Define the use case. State the business objective, systems touched, data used, outputs produced, and prohibited actions.

- Classify the data. Identify confidential business data, nonpublic personal information, and any fields that should be masked or excluded.

- Assign the decision boundary. Specify whether the agent is assistive only or whether it can trigger downstream actions.

- Map the policy rules. Tie every material output to a documented policy, procedure, or approved template.

- Set escalation rules. Require human review for exceptions, uncertain outputs, missing data, or policy deviations.

- Test before launch. Run historical-file testing for accuracy, consistency, fairness risk indicators, and record retention.

- Approve the release. Capture signoff from the business owner, compliance, information security, and technology.

- Monitor in production. Track override rates, error rates, incident volume, and changes in borrower outcomes.

This process is slower than switching on a generic AI tool. It is also how you avoid a common failure mode: a helpful workflow assistant quietly turning into an undocumented decision engine.
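The approval workflow above can be enforced with a simple release gate that lists whatever is still outstanding, together with one of the production metrics it mentions. This is an illustrative sketch: the step identifiers, signoff roles, and function names are hypothetical labels for the steps described in the list.

```python
# Pre-release steps from the workflow above, as hypothetical identifiers.
LAUNCH_STEPS = [
    "define_use_case",
    "classify_data",
    "assign_decision_boundary",
    "map_policy_rules",
    "set_escalation_rules",
    "test_before_launch",
]
REQUIRED_SIGNOFFS = {"business_owner", "compliance", "information_security", "technology"}


def launch_blockers(completed_steps, signoffs) -> list:
    """List every incomplete step and missing signoff; empty means releasable."""
    blockers = [f"step not complete: {s}" for s in LAUNCH_STEPS if s not in completed_steps]
    blockers += [f"missing signoff: {r}" for r in sorted(REQUIRED_SIGNOFFS - set(signoffs))]
    return blockers


def override_rate(overridden: int, reviewed: int) -> float:
    """Share of reviewed agent outputs that a human changed."""
    return overridden / reviewed if reviewed else 0.0


# An informal pilot that skipped data mapping and security review.
pilot = launch_blockers(
    completed_steps={"define_use_case", "test_before_launch"},
    signoffs={"business_owner", "technology"},
)
for blocker in pilot:
    print(blocker)

print(override_rate(overridden=5, reviewed=50))
```

Returning the full list of blockers, rather than a single pass or fail flag, gives the release record its own evidence of what was checked.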

Decision framework: which CRE lending tasks are suitable for agent automation

The best first deployments are tasks with heavy documentation, repeatable rules, and limited legal finality. The worst first deployments are tasks where the system must make or materially shape a credit judgment that cannot be clearly explained later.

| Task type | Suitability | Why | Control condition |
|---|---|---|---|
| Document completeness review | High | Checklist-driven and easy to test | Human confirms any ineligibility outcome |
| Data extraction from standard forms | High | Objective fields with measurable accuracy | QC sampling and confidence thresholds |
| Payoff statement preparation support | High | Rule-based inputs and strong audit need | System-of-record reconciliation before release |
| Exception memo drafting | Moderate | Useful, but framing can affect decisions | Reviewer edits and retained draft history |
| Risk grade recommendation | Moderate to low | Can embed bias and produce weak explanations | Full validation and mandatory human signoff |
| Autonomous decline or pricing | Low | Highest compliance and explainability risk | Usually unsuitable for early deployment |

The practical lesson is that suitability depends less on whether a task looks “complex” and more on whether it is bounded, reviewable, and reversible. A sophisticated document-assembly task may be safer than a simple prioritization rule if the latter changes who gets attention or what terms they receive.

Common failure points and edge cases compliance teams should test

Most AI control failures show up in exceptions, not standard files. Testing should include incomplete submissions, unusual collateral, amended terms, transferred servicing histories, and manually negotiated structures.

Edge cases often missed in pilots

Pilots usually overrepresent clean files and cooperative users. Production does not.

- Conflicting source data: the rent roll, T-12, and narrative memo disagree, and the agent does not escalate the conflict.

- Legacy policy versions: the agent uses an outdated threshold after a credit policy update.

- Template drift: legal or servicing notice language changes, but the agent keeps using an older template.

- Transferred or acquired portfolios: servicing histories are incomplete, which leads to incorrect flags or payoff components.

- Ambiguous guarantor data: individual and entity obligations are mixed in source documents, creating explanation problems.

For post-close operations, these issues often surface first in payoff, servicing, and covenant administration rather than at origination. Teams extending governance into those functions should review the related controls discussed in the page on AI agents for loan servicing.
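Two of the edge cases above, conflicting source data and legacy policy versions, lend themselves to simple automated checks. The sketch below is an assumption-laden illustration: the 2% tolerance, the source names, and both function names are hypothetical, and a lender would set its own tolerance and comparison fields.

```python
def noi_conflict(figures_by_source: dict, tolerance: float = 0.02) -> bool:
    """True when NOI figures from different sources should be escalated.

    Compares the lowest and highest reported figure relative to the highest.
    Fewer than two figures means there is nothing to compare.
    """
    figures = [v for v in figures_by_source.values() if v is not None]
    if len(figures) < 2:
        return False
    low, high = min(figures), max(figures)
    if high <= 0:
        return True  # zero or negative NOI is unusual enough to escalate
    return (high - low) / high > tolerance


def policy_is_stale(agent_policy_version: str, current_policy_version: str) -> bool:
    """True when the agent is configured with a superseded policy version."""
    return agent_policy_version != current_policy_version


sources = {"rent_roll": 1_000_000, "t12": 950_000, "narrative_memo": 1_000_000}
print(noi_conflict(sources))                          # True: 5% spread
print(noi_conflict({"rent_roll": 1_000_000, "t12": 995_000}))  # False: 0.5% spread
print(policy_is_stale("credit-policy-2025.2", "credit-policy-2026.1"))  # True
```

In both cases the intended behavior on a `True` result is escalation to a human reviewer, not an automatic correction by the agent.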

What good testing looks like in practice

Good testing uses a retrospective file set with known outcomes, policy exceptions, and document defects. It measures not just accuracy, but whether the system escalated uncertainty, preserved evidence, and produced outputs a reviewer could still explain months later.

A workable test set might include:

- Approved loans with no exceptions

- Approved loans with multiple policy exceptions

- Declined files with clear principal reasons

- Files missing key documents

- Servicing files with fee disputes or payment reversals

- Payoff scenarios involving default interest, extension fees, or escrow adjustments

That kind of testing shows what regulatory guidance alone cannot: where an operational AI system actually breaks inside a real credit file population.

Frequently Asked Questions

Can an AI agent approve or decline a CRE loan application?

An AI agent can technically score or classify an application, but most private CRE lenders should keep approval and decline authority with human approvers. If an AI output contributes to a decline or materially less favorable terms, the lender needs clear documentation of principal reasons, policy support, and retained review records.

What records should be retained for AI agents in lending workflows?

At minimum, retain source documents, prompts or rule templates, model or workflow version numbers, outputs, approvals, overrides, timestamps, and change logs. Retention periods should match the institution's recordkeeping schedule, litigation hold practices, and any product-specific regulatory requirements that apply to the file.

Do privacy requirements change if the lender uses a third-party AI vendor?

Yes. Third-party deployment adds vendor-management, contract, subprocessor, retention, and information-security issues. The lender should document whether uploaded data is retained, whether it is used for training, where it is processed, and how deletion and incident notification are handled before production use.

How does fair lending review differ for commercial real estate loans versus consumer lending?

The legal analysis can differ by product type, borrower structure, guarantor involvement, and jurisdiction, so lenders should review the applicable federal and state framework with counsel. Operationally, the control logic is the same: test data inputs, review proxy effects, monitor exceptions, and make sure any adverse outcome can be explained with specific documented reasons.

Are compliance expectations different by state or lender footprint?

Yes. State privacy laws, licensing regimes, record-retention rules, and borrower notice requirements vary by jurisdiction and business model. A multistate lender should map AI-enabled workflows to the states where it lends and services loans, then confirm whether additional state-specific contract terms, disclosures, or data-handling restrictions apply.