AI Agents for Private CRE Lending in 2026

Private CRE lenders are starting with AI agents in document-heavy work: intake, document review, servicing requests, and portfolio surveillance. This guide lays out where those agents fit across the lending lifecycle, what they should and should not automate, how to measure ROI, and which controls matter if you want an auditable system in 2026.

Private debt funds and nonbank CRE lenders still burn an enormous amount of time on PDFs, spreadsheets, emails, and servicing requests. AI agents for private commercial real estate lending are getting traction because they can take on the repetitive parts of that work (intake, document triage, covenant checks, payoff coordination, and portfolio monitoring) while humans keep control of credit calls and compliance-sensitive decisions. This article lays out where these systems actually fit, what rollout looks like in the real world, how to measure ROI, and which controls separate useful automation from a black box nobody should trust.

Key Takeaways

- AI agents create the fastest value in high-volume, document-heavy CRE lending work like borrower intake, rent roll extraction, servicing requests, covenant checks, and payoff statement prep.

- According to the National Institute of Standards and Technology AI Risk Management Framework, higher-risk AI uses need documented governance, monitoring, and human oversight. That maps cleanly to lending workflows tied to decisions, compliance, and borrower communications.

- According to the Consumer Financial Protection Bureau guidance on adverse action notices, lenders still need to explain decision reasons when credit outcomes are affected. That puts a hard limit on opaque automation.

- According to the Federal Reserve supervisory guidance on model risk management, lenders using analytical models need validation, change management, and ongoing performance review. Those same controls apply to AI-assisted underwriting and monitoring.

- When choosing a vendor, demo quality matters less than source traceability, exception handling, integrations, permissioning, and whether every output can be traced back to the loan file.

What AI agents for private commercial real estate lending actually do

AI agents for private commercial real estate lending are workflow systems, not just chat boxes with a slick UI. In practice, they take in documents and messages, apply instructions and business rules, call connected systems, generate outputs, and send exceptions to staff.

A useful definition is narrower than what most vendors pitch. In CRE lending, an AI agent is usually a workflow-level system that can read incoming borrower or asset data, classify documents, extract fields, compare those fields to lender rules, generate summaries or draft calculations, and trigger follow-up actions in a loan origination system, servicing platform, customer relationship management system, or document repository.

That distinction matters. Private lenders usually do not need fully autonomous decisioning, and frankly, they should be skeptical of anyone selling it. What they need is controlled automation in the places where staff waste time chasing missing documents, reconciling borrower numbers, checking covenant deadlines, or assembling payoff data across multiple systems. The real question is not whether an agent can “underwrite a deal.” It is whether it can cut manual work without making the process harder to audit.

Across the market, the best early use cases usually share four traits:

- Inputs arrive in inconsistent formats such as PDFs, Excel files, scanned statements, and email threads.

- The workflow follows repeatable lender policies or checklists.

- Errors are expensive but still detectable through source review.

- The task eats up analyst or servicing time without requiring broad strategic judgment.

That is why lenders are moving first into AI agents for borrower intake, CRE document analysis, loan servicing, and portfolio monitoring, not fully automated credit approvals.

Where AI agents fit across the CRE lending lifecycle

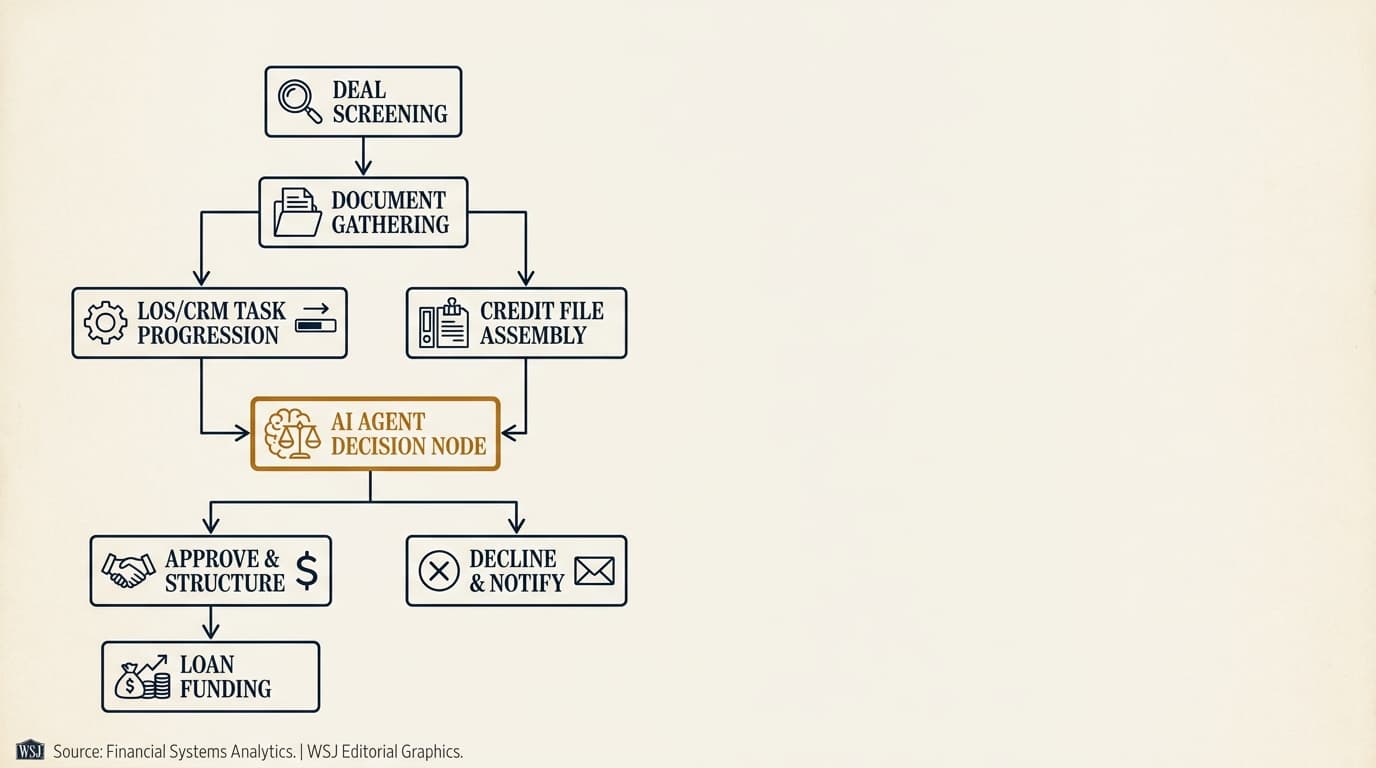

Private CRE lending has enough variation that no single automation map works for every shop. But the lifecycle is stable enough that most deployments fall into five operating layers: intake, origination, underwriting, servicing, and portfolio oversight.

The table below shows where lenders can expect realistic near-term automation and where human review still needs to stay in the loop.

| Lifecycle stage | Typical agent tasks | Human role | Primary risk |
|---|---|---|---|
| Borrower intake | Collect documents, classify files, detect missing items, send follow-ups | Review exceptions, confirm borrower identity, resolve edge cases | Missing or misclassified documents |
| Origination | Create records, route submissions, populate fields, assign tasks | Set deal strategy, approve handoffs, manage broker relationships | Workflow errors and duplicate records |
| Underwriting | Extract rent roll and T-12 data, flag DSCR and LTV exceptions, summarize risks | Interpret collateral quality, sponsor strength, market risk, structure | False confidence from incomplete or inaccurate data |
| Servicing | Process requests, draft payoff packages, monitor payment exceptions, route notices | Approve borrower-facing outputs, handle disputed balances | Incorrect balances, communication mistakes, compliance gaps |
| Portfolio monitoring | Track maturities, covenant deadlines, watchlist triggers, reporting gaps | Decide interventions, restructure terms, escalate problem loans | Missed triggers or poor exception triage |

Each stage needs different controls. An intake agent can usually run with more autonomy because the outputs are administrative and easy to verify. A servicing or underwriting agent needs tighter controls because its outputs may affect financial terms, borrower communications, or decisions that have to be explained later.

For a more detailed workflow view, lenders evaluating front-end operations should review the related guides on AI agents for CRE loan origination and the CRE loan origination workflow AI agent. Credit teams looking at decision support should review AI agents for CRE underwriting and AI underwriting for private lenders.

Borrower intake and document collection use cases

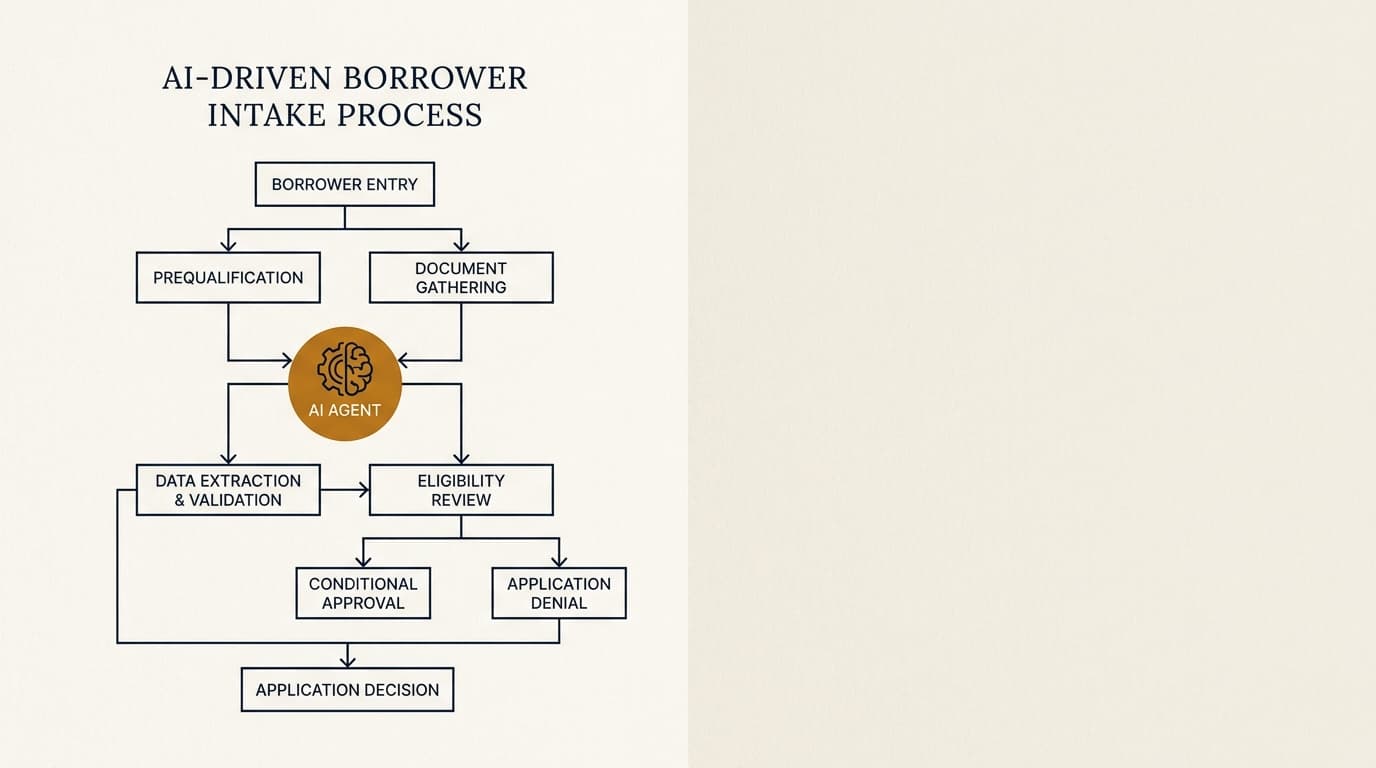

Borrower intake is usually the cleanest place to start. The work is repetitive, rules-based, and easy to measure. An intake agent can compare submitted files against a program checklist, identify gaps, standardize naming, and draft borrower follow-ups without touching credit policy.

Private lenders usually get borrower packages through email, shared drives, broker submissions, and improvised portals. One deal can include organizational charts, schedules of real estate owned, personal financial statements, rent rolls, trailing 12-month operating statements, appraisals, insurance certificates, and entity formation documents. The problem is not just collecting all of that. It is getting it into a consistent, usable format.

An intake agent can usually do four things well:

- Classify incoming documents by type and entity.

- Check completeness against product-specific requirements.

- Extract key metadata such as borrower name, guarantor, property address, statement date, and file period.

- Draft targeted deficiency requests instead of vague “missing items” emails.

This is where many lenders see the first clear win. Manual intake often turns into multiple back-and-forth cycles because staff ask for broad categories of information instead of pointing to what is actually missing or out of date. An agent that can identify that the rent roll is two months stale, the insurance certificate expires before the expected closing, or one guarantor statement is unsigned can cut cycle time before underwriting even starts.
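The completeness and staleness checks described above can be sketched in a few lines. This is a minimal illustration, not a production design: the `CHECKLIST` contents, the age limits, and the names `SubmittedDoc` and `find_deficiencies` are all assumptions made for the example, and a real program checklist would come from the lender's own product requirements.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical program checklist: document type -> maximum age in days.
# None means no staleness rule applies to that document type.
CHECKLIST = {
    "rent_roll": 30,
    "t12_operating_statement": 90,
    "personal_financial_statement": 90,
    "insurance_certificate": None,
}


@dataclass
class SubmittedDoc:
    doc_type: str
    as_of: Optional[date] = None    # statement or report date
    expires: Optional[date] = None  # e.g. insurance expiration
    signed: bool = True


def find_deficiencies(docs, today, expected_closing):
    """Return specific deficiency messages instead of a vague 'missing items' note."""
    by_type = {d.doc_type: d for d in docs}
    issues = []
    for doc_type, max_age_days in CHECKLIST.items():
        doc = by_type.get(doc_type)
        if doc is None:
            issues.append(f"{doc_type}: not received")
            continue
        # Staleness: compare the document date to the allowed age.
        if max_age_days is not None and doc.as_of is not None:
            age = (today - doc.as_of).days
            if age > max_age_days:
                issues.append(
                    f"{doc_type}: dated {doc.as_of}, {age} days old (limit {max_age_days})"
                )
        # Expiration before closing, e.g. an insurance certificate.
        if doc.expires is not None and doc.expires < expected_closing:
            issues.append(
                f"{doc_type}: expires {doc.expires}, before expected closing {expected_closing}"
            )
        if not doc.signed:
            issues.append(f"{doc_type}: unsigned")
    return issues
```

Each message names the document and the exact problem, which is what lets a drafted follow-up ask for one specific fix rather than a broad category.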

According to the Federal Financial Institutions Examination Council statement on prudent use of artificial intelligence and machine learning, governance around data quality, third-party risk, and outcome monitoring still matters even when AI is used in operational processes rather than direct credit decisioning. That applies here. Intake automation is lower risk than credit automation, but it is not risk-free.

Lenders that want a closer look at this area should see the related guides on AI agents for borrower intake and AI agents for borrower communication.

Document analysis in practice

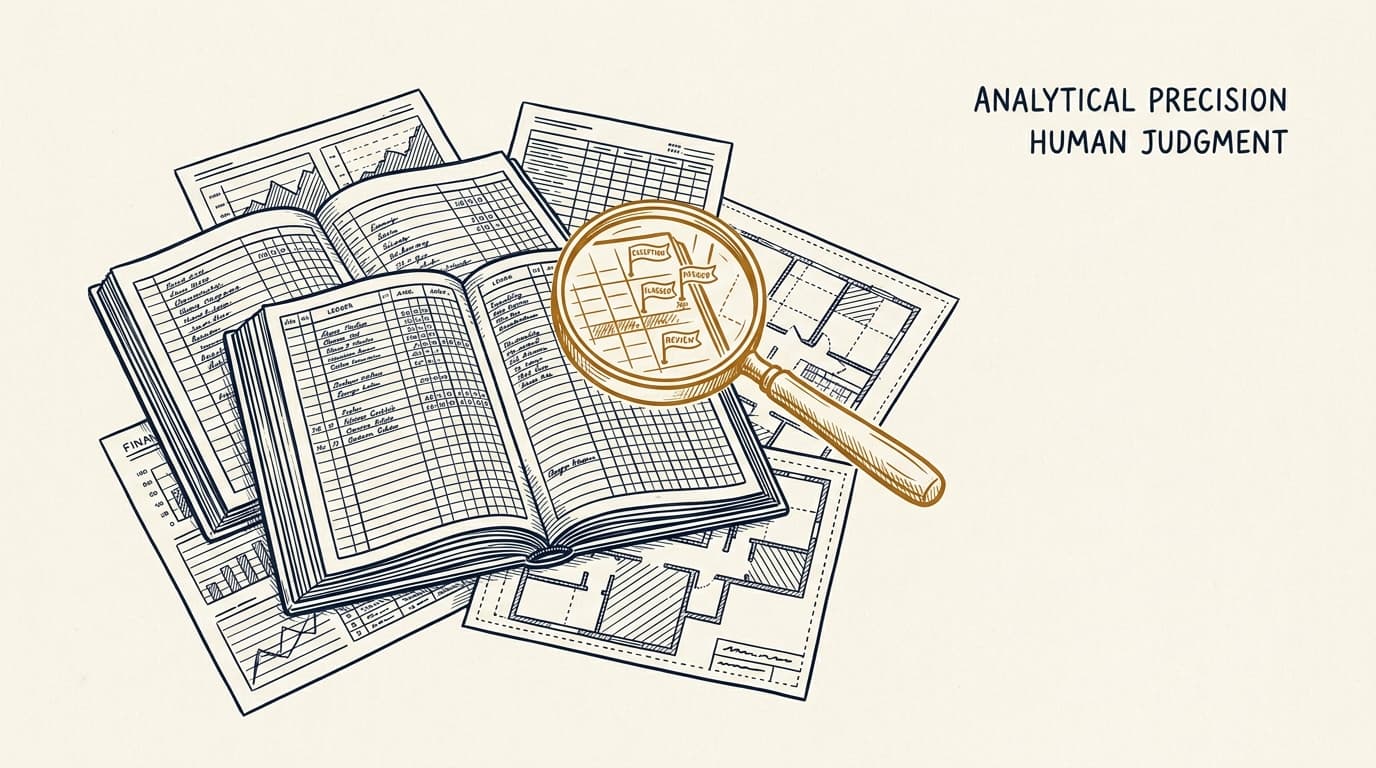

CRE document analysis sits between intake and underwriting because extracted data becomes underwriting input. The value is highest when the system preserves source references field by field, instead of handing back a clean-looking spreadsheet with no way to check where anything came from.

For rent rolls and operating statements, the standard should be simple: every extracted field should tie to a source page, source row, and confidence indicator. If a vacancy figure, lease expiration date, or base rent amount cannot be traced back to the original file, the extraction is not reliable enough for credit use.
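One way to make that standard concrete is to carry the source reference on every extracted value and refuse to pass untraceable or low-confidence fields downstream. The sketch below assumes a hypothetical `ExtractedField` record and an illustrative 0.90 confidence threshold; neither comes from a specific product, and the right threshold depends on the lender's own QC results.

```python
from dataclasses import dataclass
from typing import Optional

MIN_CONFIDENCE = 0.90  # illustrative threshold, not a recommended value


@dataclass(frozen=True)
class ExtractedField:
    name: str
    value: str
    source_file: Optional[str]
    source_page: Optional[int]
    source_row: Optional[int]
    confidence: Optional[float]  # 0.0 to 1.0, as reported by the extractor


def usable_for_credit(field, min_confidence=MIN_CONFIDENCE):
    """A field qualifies only if it is fully traceable and meets the confidence bar."""
    traceable = (
        field.source_file is not None
        and field.source_page is not None
        and field.source_row is not None
    )
    confident = field.confidence is not None and field.confidence >= min_confidence
    return traceable and confident


def split_for_review(fields):
    """Separate fields that can flow to underwriting from those needing human review."""
    accepted = [f for f in fields if usable_for_credit(f)]
    needs_review = [f for f in fields if not usable_for_credit(f)]
    return accepted, needs_review
```

The design choice here is that a missing source reference is treated the same as a low confidence score: both send the field to a reviewer rather than into a spreadsheet.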

This matters because CRE documents are rarely standardized. One sponsor may send a rent roll with unit-level lease dates and concessions. Another sends a property management export full of abbreviations that need interpretation. A strong agent can normalize those formats. A weak one can hide mistakes behind a polished summary, which is worse than no automation at all.

Teams evaluating this layer should review the related guides on AI agents for CRE document analysis and AI document extraction for rent rolls.

Origination and underwriting use cases

Underwriting agents work best as decision-support tools, not autonomous credit officers. They can surface discrepancies, calculate ratios, summarize collateral and sponsor information, and draft approval memos. Final structure and approval should stay with lending staff.

Private CRE underwriting mixes hard numbers with judgment about business plans, sponsorship, market execution risk, and collateral liquidity. That combination makes full automation a bad fit. It still leaves a lot of room for AI-assisted work, though.

Typical underwriting tasks agents can support include:

- Extracting property cash flow inputs from T-12s, rent rolls, bank statements, and borrower narratives.

- Reconciling inconsistencies between borrower-provided numbers and third-party reports.

- Calculating debt service coverage ratio, debt yield, and loan-to-value using lender-specific assumptions.

- Flagging missing reserves, rollover concentration, tenant exposure, declining occupancy, or unsupported income adjustments.

- Drafting first-pass investment committee memos with linked citations to source materials.

Where these systems usually break down is not the math. It is assumption control. If one analyst calculates DSCR from underwritten NOI with stressed expenses and another uses in-place trailing figures, the problem is policy consistency, not arithmetic. The agent helps only if it applies the lender’s rules in a way that is documented and repeatable.
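The point about assumption control can be illustrated with a small sketch in which the ratio math is trivial and the policy object does the real work. The ratios use their standard definitions (DSCR as NOI over annual debt service, debt yield as NOI over loan amount, LTV as loan amount over value). The `UnderwritingPolicy` fields, the stress parameters, and the function names are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnderwritingPolicy:
    """Hypothetical locked assumptions; frozen so a run cannot silently alter them."""
    version: str
    expense_stress: float  # e.g. 0.05 raises operating expenses by 5%
    vacancy_floor: float   # minimum vacancy applied to gross potential rent


def underwritten_noi(gross_potential_rent, actual_vacancy, operating_expenses, policy):
    """Apply the policy's vacancy floor and expense stress to in-place figures."""
    vacancy = max(actual_vacancy, policy.vacancy_floor)
    effective_income = gross_potential_rent * (1 - vacancy)
    stressed_expenses = operating_expenses * (1 + policy.expense_stress)
    return effective_income - stressed_expenses


def credit_ratios(noi, annual_debt_service, loan_amount, appraised_value, policy):
    """Compute standard ratios and record which policy version produced them."""
    return {
        "policy_version": policy.version,
        "dscr": noi / annual_debt_service,
        "debt_yield": noi / loan_amount,
        "ltv": loan_amount / appraised_value,
    }


# Illustrative inputs only.
policy = UnderwritingPolicy(version="2026-01", expense_stress=0.05, vacancy_floor=0.05)
noi = underwritten_noi(1_000_000, 0.03, 400_000, policy)
ratios = credit_ratios(noi, 400_000, 5_000_000, 8_000_000, policy)
```

Because the policy version travels with the output, two analysts who get different DSCR figures can see immediately whether they applied different assumptions or different inputs.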

According to the Federal Reserve SR 11-7 guidance on model risk management, institutions should maintain validation, governance, and use controls for models that influence business decisions. SR 11-7 was not written for modern generative AI, but the core ideas still fit underwriting agents directly: conceptual soundness, ongoing monitoring, and outcome analysis.

For deeper coverage, see the related guides on AI agents for CRE underwriting, AI underwriting for private lenders, and AI agents for DSCR and LTV analysis.

A decision framework for underwriting automation

The right automation boundary depends on whether the task is deterministic, reviewable, and material to credit approval. In practice, lenders should automate calculations and evidence gathering first, automate risk flagging second, and keep final structure and exceptions under human control.

| Underwriting task | Automate now | Human review required | Reason |
|---|---|---|---|
| Document intake and indexing | Yes | Exception only | Rules-based and easy to verify |
| Field extraction from rent rolls and T-12s | Yes, with QC | Spot-check and thresholds | High value if source-linked |
| DSCR and LTV calculations | Yes, if assumptions locked | Review assumptions and overrides | Formulaic but sensitive to assumptions |
| Risk flag generation | Yes | Interpret materiality | Useful for triage, not final judgment |
| Credit approval recommendation | Limited | Required | Needs context, policy interpretation, and explanation |
| Exception approval | No | Required | Policy and governance issue |

That is the practical line between useful augmentation and avoidable risk. If the task can be checked against source data and policy logic, an agent can save time. If it requires balancing sponsor quality, market execution, and structure trade-offs, staff should stay accountable.

Servicing, payoff, and post-close operations

Post-close servicing is one of the most appealing areas for AI deployment because the requests are frequent, rules-based, and time-sensitive. Servicing teams deal with payment questions, reserve draws, insurance updates, borrower notices, assumption requests, extension packages, covenant reporting, and payoff statements across multiple systems.

Here, agents are less about prediction and more about orchestration. They gather records from servicing systems, document repositories, and communication logs, identify what the borrower requested, determine the right workflow, and draft the next step.

Common servicing use cases include:

- Classifying incoming borrower requests and routing them to the right queue.

- Monitoring payment exceptions, unapplied funds, short pays, and failed ACH activity.

- Checking whether reserve draw requests include required invoices, lien waivers, and inspection support.

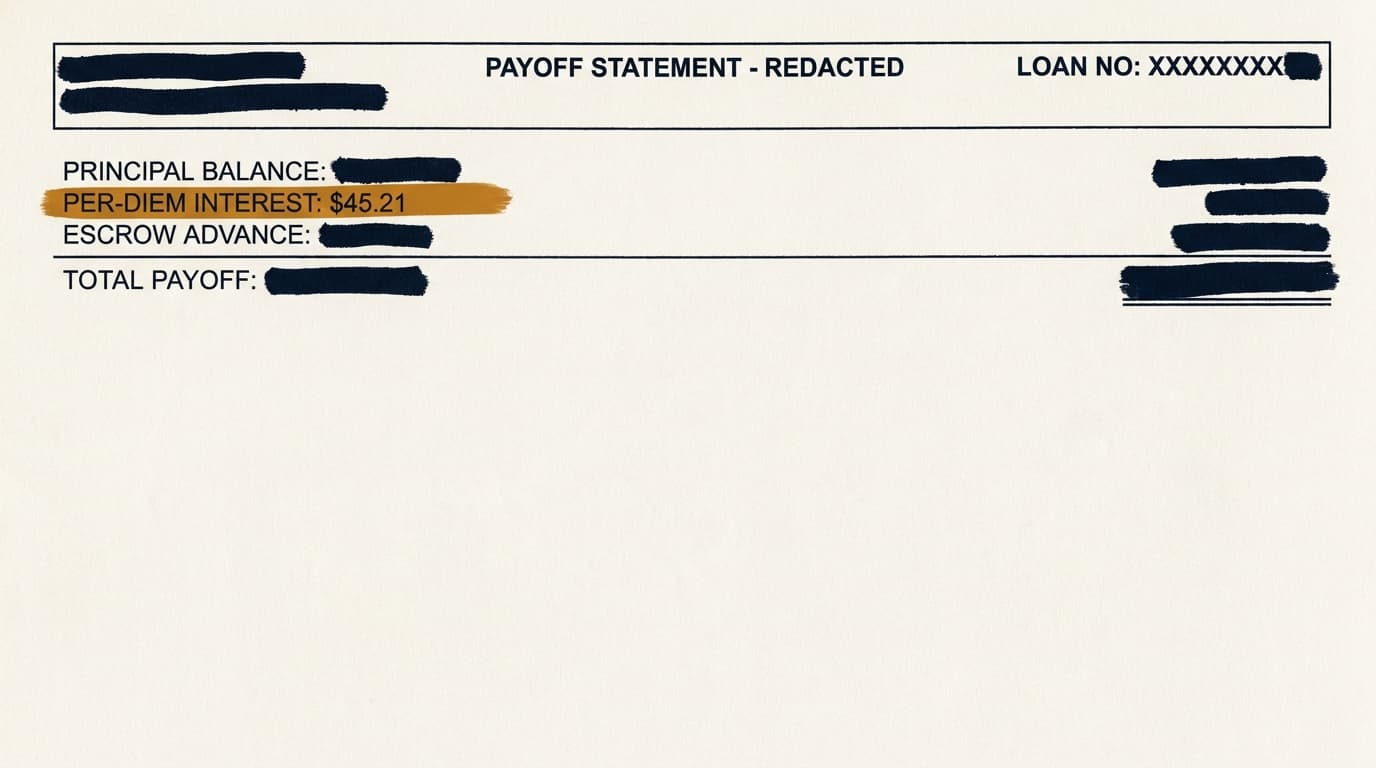

- Assembling payoff inputs from principal balance, accrued interest, fees, default interest status, and per diem calculations.

- Drafting borrower-facing notices and internal servicing summaries for review.

This is also where auditability stops being optional. A payoff statement is not just a customer service document. It is a financial representation that may be disputed, relied on in a closing, or reviewed later in detail. The standard should be full source traceability: every fee, balance component, and date assumption needs to tie back to servicing records and governing loan documents.
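A simplified sketch of payoff assembly shows what "tie back to servicing records" can look like in practice. It assumes simple interest on an actual/360 basis and a per diem rounded to the cent before multiplying by days; both are illustrative conventions, and the governing loan documents control the real calculation. Default interest is left out for brevity, and the names `PayoffLine` and `draft_payoff` are hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


@dataclass(frozen=True)
class PayoffLine:
    label: str
    amount: Decimal
    source: str  # where the figure came from, e.g. ledger entry or loan agreement section


def per_diem(principal, annual_rate, day_count_basis=360):
    """Daily interest, assuming simple interest on the stated day-count basis."""
    daily = principal * annual_rate / Decimal(day_count_basis)
    return daily.quantize(CENT, rounding=ROUND_HALF_UP)


def draft_payoff(principal, annual_rate, interest_paid_through, payoff_date, fees):
    """Assemble a draft payoff in which every line carries its source."""
    days = (payoff_date - interest_paid_through).days
    daily = per_diem(principal, annual_rate)
    lines = [
        PayoffLine("Principal balance", principal, "servicing ledger"),
        PayoffLine(f"Accrued interest ({days} days)", daily * days,
                   "note rate and interest paid-through date"),
    ]
    lines.extend(fees)
    total = sum((line.amount for line in lines), Decimal("0"))
    # The draft is never final on its own; a reviewer approves before release.
    return {"per_diem": daily, "lines": lines, "total": total,
            "status": "DRAFT_PENDING_REVIEW"}


# Illustrative figures only.
payoff = draft_payoff(
    principal=Decimal("2000000.00"),
    annual_rate=Decimal("0.09"),
    interest_paid_through=date(2026, 3, 1),
    payoff_date=date(2026, 3, 16),
    fees=[PayoffLine("Payoff processing fee", Decimal("350.00"), "fee schedule")],
)
```

Using `Decimal` rather than floats avoids binary rounding surprises in currency amounts, and the `status` field keeps the review gate explicit in the output itself.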

That is why lenders evaluating post-close automation should look closely at the related guides on AI agents for loan servicing and AI for loan servicing payment management. A system that drafts servicing outputs quickly but cannot show its work usually creates rework, not savings.

Portfolio monitoring and exception management

Portfolio monitoring agents tend to be most useful after closing because loan portfolios generate recurring data and recurring deadlines. An agent can track maturities, covenant due dates, insurance expirations, borrowing base reports, financial statement deadlines, and watchlist triggers more consistently than a process built on email and spreadsheets.

Many private lenders still run post-close surveillance through some combination of analyst calendars, manual covenant trackers, and servicing notes. That can work at small scale. It gets fragile fast as portfolios grow, especially when a lender manages multiple products, sponsor types, and reporting frequencies.

A monitoring agent can compare incoming borrower submissions against required reporting packages, detect breaches or missing items, and draft internal watchlist summaries. It can also flag concentration risks such as multiple maturities in one quarter, repeated late reporting by a sponsor, or declining collateral performance across a property subtype.

The higher-value use case is not just sending reminders. It is combining operational data and risk signals into an exception queue a portfolio manager can actually work from. For example:

- A bridge loan is 75 days from maturity, extension conditions include updated financials and debt yield tests, and the borrower has not submitted current reports.

- A covenant package was received, but occupancy dropped below the reporting trigger and the debt yield calculation fails under the lender’s policy assumptions.

- An insurance certificate was uploaded, but the additional insured language does not match loan requirements.

Those are good agent tasks because they rely on repeatable rules and produce exceptions someone can act on immediately. More detailed treatment lives in the related guides on AI agents for portfolio monitoring, AI agents for watchlist and maturity management, and AI agents for loan covenant monitoring.
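The exception-queue idea can be sketched as a set of plain rules applied to a loan snapshot. Everything here is an assumption for illustration: the `LoanSnapshot` fields, the 90-day maturity window, the 85% occupancy trigger, and the 8% minimum debt yield are placeholders for whatever the loan documents and lender policy actually specify.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Illustrative thresholds; real values come from loan documents and lender policy.
MATURITY_WINDOW_DAYS = 90
OCCUPANCY_TRIGGER = 0.85
MIN_DEBT_YIELD = 0.08


@dataclass
class LoanSnapshot:
    loan_id: str
    maturity: date
    financials_received: bool
    occupancy: Optional[float] = None
    debt_yield: Optional[float] = None


def build_exception_queue(loans, today):
    """Turn repeatable rules into a list of exceptions a portfolio manager can work."""
    queue = []
    for loan in loans:
        days_left = (loan.maturity - today).days
        if 0 <= days_left <= MATURITY_WINDOW_DAYS and not loan.financials_received:
            queue.append({
                "loan_id": loan.loan_id,
                "rule": "maturity_reporting",
                "detail": f"{days_left} days to maturity, current financials not received",
            })
        if loan.occupancy is not None and loan.occupancy < OCCUPANCY_TRIGGER:
            queue.append({
                "loan_id": loan.loan_id,
                "rule": "occupancy_trigger",
                "detail": f"occupancy {loan.occupancy:.0%} below {OCCUPANCY_TRIGGER:.0%}",
            })
        if loan.debt_yield is not None and loan.debt_yield < MIN_DEBT_YIELD:
            queue.append({
                "loan_id": loan.loan_id,
                "rule": "debt_yield_test",
                "detail": f"debt yield {loan.debt_yield:.1%} below {MIN_DEBT_YIELD:.1%}",
            })
    return queue
```

Each queue entry names the loan, the rule that fired, and the reason, so the reviewer decides the intervention while the agent only does the detection.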

A practical implementation framework for private lenders

Most private lenders should start with one bounded workflow, one owner, and one measurable operating baseline. The fastest way to waste time is an enterprise-wide pilot with no control owner, no exception design, and no agreed definition of success.

A workable implementation sequence looks like this:

- Map one high-volume workflow end to end, including systems touched, manual steps, review points, and exception types.

- Choose a use case with clear source documents and measurable turnaround time, such as borrower intake, rent roll extraction, servicing request routing, or covenant package review.

- Define the policy logic the agent will follow, including thresholds, required fields, approval limits, and escalation rules.

- Identify systems of record and decide whether the agent can write data back or only propose changes for approval.

- Build a source-traceability standard so every extracted field, ratio, and draft output links to underlying documents or servicing records.

- Set quality thresholds before launch, including acceptable extraction accuracy, exception rates, and turnaround time improvement.

- Run the agent in parallel with staff for a test period and measure variance between agent output and final human-reviewed output.

- Limit production access by role, maintain change logs, and require review for borrower-facing or decision-relevant outputs.

- Review outcomes monthly and retrain or reconfigure only through documented change control.

This is less flashy than the usual full-stack automation pitch, but it is how lending operations actually work. The first goal should be a predictable reduction in manual touches, not vague “transformation.”
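For the parallel-run step in the sequence above, one simple measure is the share of fields where the agent's value matches the final human-reviewed value. The sketch below is one possible way to compute that; the function name and the exact-match comparison are assumptions, and a real test would likely normalize formats (dates, currency) before comparing.

```python
def field_match_rate(agent_output, reviewed_output):
    """Compare agent values to final reviewed values, field by field.

    Returns the match rate and a dict of mismatches as
    {field: (agent_value, reviewed_value)}. Fields the agent missed
    entirely count as mismatches with an agent value of None.
    """
    if not reviewed_output:
        raise ValueError("reviewed_output must contain at least one field")
    mismatches = {
        name: (agent_output.get(name), final_value)
        for name, final_value in reviewed_output.items()
        if agent_output.get(name) != final_value
    }
    rate = 1 - len(mismatches) / len(reviewed_output)
    return rate, mismatches


# Illustrative file: four reviewed fields, one corrected by the reviewer.
agent = {"borrower": "Elm St LLC", "units": 42, "occupancy": 0.93, "base_rent": 51200}
final = {"borrower": "Elm St LLC", "units": 42, "occupancy": 0.91, "base_rent": 51200}
rate, diffs = field_match_rate(agent, final)
```

Tracking the mismatches, not just the rate, matters because the pattern of errors (one document type, one field) usually points to the rule or template that needs attention.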

Lenders comparing operating models should also review the related guide on build vs. buy AI agents for lending.

How to measure ROI for AI agents in CRE lending

ROI in CRE lending automation usually comes from lower labor per loan, faster cycle times, fewer preventable exceptions, and better post-close follow-up. It almost never comes from replacing underwriters or asset managers outright.

The cleanest way to measure it is at the workflow level. Compare pre-implementation and post-implementation performance inside one process instead of claiming some firm-wide AI return that nobody can really prove. Useful metrics include:

- Average time from initial submission to complete file.

- Number of borrower follow-up cycles per file.

- Analyst time spent on document extraction and reconciliation.

- Servicing request turnaround time.

- Late covenant package rate.

- Exceptions found after initial review versus before.

- Borrower communication response time.

A practical ROI model also needs cost categories vendors tend to downplay. Include software fees, implementation services, integration work, testing time, internal project management, control design, and ongoing human review. If a lender saves 20 analyst hours a week but adds heavy exception review and reconciliation work, labor may have moved around rather than gone down.
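A back-of-the-envelope version of that calculation is sketched below. The 20 saved hours per week comes from the example above; every other figure (added review hours, loaded hourly rate, software and implementation costs, three-year amortization) is a made-up input chosen only to show how quickly added review work and fixed costs can absorb the savings.

```python
def net_annual_benefit(
    hours_saved_per_week,
    review_hours_added_per_week,
    loaded_hourly_rate,
    annual_software_cost,
    one_time_implementation_cost,
    amortization_years=3,
    weeks_per_year=52,
):
    """Workflow-level ROI: net labor value minus recurring and amortized costs."""
    net_hours = (hours_saved_per_week - review_hours_added_per_week) * weeks_per_year
    labor_value = net_hours * loaded_hourly_rate
    annual_cost = annual_software_cost + one_time_implementation_cost / amortization_years
    return {
        "net_hours_per_year": net_hours,
        "labor_value": labor_value,
        "annual_cost": annual_cost,
        "net_benefit": labor_value - annual_cost,
    }


# Illustrative inputs only.
result = net_annual_benefit(
    hours_saved_per_week=20,
    review_hours_added_per_week=8,
    loaded_hourly_rate=85,
    annual_software_cost=40_000,
    one_time_implementation_cost=30_000,
)
```

With these inputs, the 20 gross hours shrink to 12 net hours a week and the annual net benefit is small, which is the situation the paragraph above warns about: labor moved around rather than removed.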

One useful way to evaluate use cases is on two axes: manual effort and error cost. High manual effort with low-to-moderate error cost, like intake chasing and document indexing, is usually the best place to start. High manual effort with high error cost, like payoff calculations or covenant breach handling, can still be worth it, but only if traceability and review controls are strong.

| Use case | Manual effort | Error cost | ROI potential | Control intensity |
|---|---|---|---|---|
| Borrower intake | High | Low to moderate | High | Moderate |
| Rent roll extraction | High | Moderate | High | High |
| IC memo drafting | Moderate | Moderate | Moderate | High |
| Payment exception routing | Moderate | Moderate | Moderate to high | High |
| Payoff statement assembly | Moderate | High | High if auditable | Very high |
| Portfolio watchlist monitoring | High | High if missed | High | High |

This kind of scoring is more useful than generic productivity claims because it forces a lender to weigh savings against operational exposure.

Compliance, auditability, and model risk controls

Any AI deployment that influences lending decisions, borrower treatment, or financial outputs needs governance on par with other controlled bank or nonbank workflows. The minimum bar is not whether the system feels innovative. It is whether an auditor, investor, regulator, or litigation team can reconstruct what happened.

Three frameworks matter most here. According to the NIST AI Risk Management Framework, organizations should govern AI use through documented policies, measurement, and lifecycle monitoring. According to the Federal Reserve SR 11-7 guidance, model outputs that influence decisions need validation and controls. According to the Consumer Financial Protection Bureau resources on adverse action notices, creditors still need to provide reasons for certain adverse credit decisions covered by law.

Private CRE lenders are not all supervised the same way, and not every loan or borrower type sits under the same consumer-facing rules. Still, the control principles carry across the sector:

- Document the intended use of each agent.

- Separate automation support from final approval authority.

- Log prompts, rules, versions, overrides, and user actions.

- Retain source documents and link outputs back to them.

- Test for accuracy drift when document formats or workflows change.

- Restrict external communications unless reviewed.

- Assess third-party vendor security and subcontractor exposure.

For compliance-specific design, see the related guides on AI agents for CRE lending compliance and AI agents for KYC and AML in lending.

What auditability looks like in practice

Auditability means more than keeping an activity log. In lending, it means the lender can reconstruct the exact sources, rules, calculations, user interventions, and final outputs tied to a loan event.

An auditable AI-assisted workflow should preserve at least these records:

- The source documents used and the version received.

- The fields extracted from each source and where they were found.

- The policy or calculation rules applied.

- Any confidence scores, missing data flags, or exceptions generated.

- The human reviewer, edits made, and approval timestamp.

- The final borrower-facing or internal output sent.

That standard matters most for servicing outputs such as payoff statements, fee calculations, and default-related notices. These are not disposable workflow artifacts. They are records that may be contested later.
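The record list above maps naturally onto a single structured audit entry per loan event. The sketch below is one hypothetical shape for such an entry, using content hashes to pin the exact document versions and the exact output sent. Field names and the JSON serialization are assumptions for illustration, not a standard schema.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


def sha256_hex(data: bytes) -> str:
    """Content hash used to pin the exact version of a document or output."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class AuditRecord:
    loan_id: str
    event: str
    source_documents: list   # [{"name": ..., "sha256": ...}]
    extracted_fields: list   # [{"name": ..., "value": ..., "page": ..., "confidence": ...}]
    rules_applied: list      # policy or calculation rule identifiers and versions
    exceptions: list         # missing-data flags or exceptions generated
    reviewer: str
    reviewer_edits: list
    approved_at: str         # ISO 8601 timestamp
    final_output_sha256: str

    def to_json(self) -> str:
        # sort_keys gives a stable serialization that is easy to diff later.
        return json.dumps(asdict(self), sort_keys=True)


# Illustrative record for a payoff statement event.
statement_bytes = b"payoff statement contents"
record = AuditRecord(
    loan_id="BR-101",
    event="payoff_statement",
    source_documents=[{"name": "ledger_export.csv", "sha256": sha256_hex(b"ledger")}],
    extracted_fields=[{"name": "principal", "value": "2000000.00", "page": 1, "confidence": 0.99}],
    rules_applied=["per_diem_actual_360@v3"],
    exceptions=[],
    reviewer="servicing.manager",
    reviewer_edits=[],
    approved_at="2026-03-16T15:04:00+00:00",
    final_output_sha256=sha256_hex(statement_bytes),
)
serialized = record.to_json()
```

Hashing the final output means a later dispute can be checked against the exact bytes that were sent, not a regenerated copy.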

Build vs buy: how lenders should evaluate vendors

Most private lenders should buy before they build unless they have unusual scale, a large engineering team, and stable internal process design. The hard part is rarely model access. It is workflow integration, permissions, audit logging, and keeping the system running in production.

Vendor evaluation should start with operating questions, not architecture slides. A serious review should ask:

- Which systems of record does the product integrate with today?

- Can it show page-level and field-level source citations?

- How are overrides handled and logged?

- What happens when confidence is low or documents conflict?

- Can the lender define its own rules, thresholds, and queue routing?

- Where is data processed and retained?

- What does implementation require from internal operations and IT teams?

- How quickly can workflows be modified when loan products or policies change?

The case for building gets stronger only when a lender has highly differentiated processes, proprietary data advantages, or enough volume to justify long-term ownership. Even then, many teams underestimate the maintenance load. Every new document template, servicing exception type, or policy revision creates more support work.

The dedicated comparison is in the related guide on build vs. buy AI agents for lending.

Where AI agents fail in CRE lending

Most failures come from weak workflow design, not from AI in the abstract. Lenders get into trouble when they deploy agents into processes with undefined policies, poor source data, or no clear owner for exceptions.

Common failure patterns include:

- Using extracted values without preserving source references.

- Letting an agent write to core systems without approval gates.

- Assuming one workflow works across bridge, construction, and stabilized loans.

- Relying on generic prompts instead of lender-specific rules.

- Measuring success by demo output quality instead of live exception rates.

- Automating borrower communications without servicing or legal review.

There are also product-specific edge cases. Bridge lending often involves extension options, rehab milestones, reserve mechanics, and sponsor updates that change faster than they do in stabilized permanent loans. That makes rule drift more likely. Lenders with heavy transitional asset exposure should review the related guide on AI agents for bridge loans before applying one operating model across the whole portfolio.

The core lesson is simple: an agent should enter a workflow only after the lender has defined what “correct” looks like, how exceptions get handled, and who owns the final output.

Frequently Asked Questions

What are AI agents for private commercial real estate lending?

AI agents for private commercial real estate lending are workflow systems that read documents and messages, apply lender rules, generate structured outputs, and route exceptions across intake, underwriting, servicing, and portfolio monitoring. In most private lending environments, they support human teams rather than making final credit decisions on their own.

Which CRE lending workflows are the best starting point for AI agents?

The best starting points are borrower intake, document classification, rent roll and T-12 extraction, servicing request routing, payment exception handling, and portfolio reporting checks. These workflows are repetitive, document-heavy, and easier to audit than final credit decisions or bespoke restructuring work.

How should a private lender measure ROI from AI agents?

Measure ROI at the workflow level using before-and-after metrics such as time to complete a borrower file, analyst hours spent on extraction and reconciliation, servicing turnaround time, covenant reporting timeliness, and exception rates. Include software cost, implementation labor, testing time, and ongoing review in the calculation instead of counting labor savings alone.

Do compliance expectations vary by lender type or location?

Yes. Compliance expectations vary based on lender structure, loan product, borrower type, investor requirements, servicing arrangements, and applicable federal or state law. A private debt fund making business-purpose loans in one state may face a different mix of licensing, servicing, notice, and recordkeeping obligations than a balance-sheet lender or federally supervised institution. Legal review should cover the jurisdictions where loans are originated, serviced, and enforced.

Should lenders build their own AI agents or buy a vendor product?

Most lenders should start with a vendor product if they need faster deployment, prebuilt integrations, and audited workflow controls. Building internally makes more sense when the lender has specialized processes, enough engineering capacity, and a clear long-term case for owning workflow logic and infrastructure. The decision usually turns on integration burden, control requirements, and maintenance capacity more than model performance alone.