AI Agents for CRE Underwriting: Controls and Risks

AI tools for CRE underwriting can pull together borrower, property, and market data much faster than a manual process, but they should support analyst judgment, not make credit decisions on their own. For private lenders, they’re usually most useful for triage, data reconciliation, flagging exceptions, and producing audit-ready handoffs.

CRE underwriting falls apart fast when the inputs are wrong. One stale rent roll, one misread trailing-12, or one missing insurance item can skew debt yield, debt service coverage ratio, and loan-to-value before a credit memo ever gets to committee. AI agents for CRE underwriting are useful when they pull together borrower, property, and market data, flag gaps, and route exceptions for review. They are not a substitute for credit judgment.

This article covers where underwriting agents fit in a private lending workflow, what they can automate reliably, where the control boundary should sit, and which governance measures matter in 2026. It also draws a hard line between decision support and automated approval. That line matters more now that regulators are paying closer attention to model risk, fair lending, data governance, and adverse action practices in credit decisions.

Key Takeaways

- AI agents for CRE underwriting are best at data assembly, reconciliation, and exception routing. Final credit decisions should stay with designated underwriters or credit officers.

- According to the Federal Reserve's Supervisory Guidance on Model Risk Management (SR 11-7), any model that influences decisions should be validated, controlled in use, and monitored over time.

- According to the Consumer Financial Protection Bureau circular on adverse action notices and complex algorithms, creditors cannot dodge notice obligations by relying on opaque algorithmic systems.

- Private lenders get the most value from underwriting agents in incomplete file detection, cross-document variance checks, covenant and policy exception tagging, and memo prep support.

- A controlled rollout usually starts with analyst-side recommendations, confidence thresholds, and audit logs before any scoring output is allowed to influence approval routing.

AI agents for CRE underwriting work best as decision support, not automated credit approval

An underwriting agent is easiest to defend when it prepares and structures a file for human review. In practice, that means pulling data from application forms, bank statements, leases, rent rolls, third-party reports, and market data providers, then showing the assigned analyst what is missing and what may break policy.

That distinction matters because U.S. regulators have been clear: institutions are still responsible for model outputs. According to the Federal Reserve's SR 11-7 guidance, model risk comes from both wrong outputs and misuse of correct ones. According to the Office of the Comptroller of the Currency guidance on model risk management, banks should maintain sound governance over development, implementation, validation, and change management. Private debt funds and nonbank lenders are not supervised the same way, but the basic control logic does not change if model outputs affect credit decisions, committee materials, pricing, or decline rationale.

For a broader workflow view, see AI agents for private commercial real estate lending. This page stays focused on the underwriting layer: file assembly, missing-item detection, exception analysis, and handoff controls.

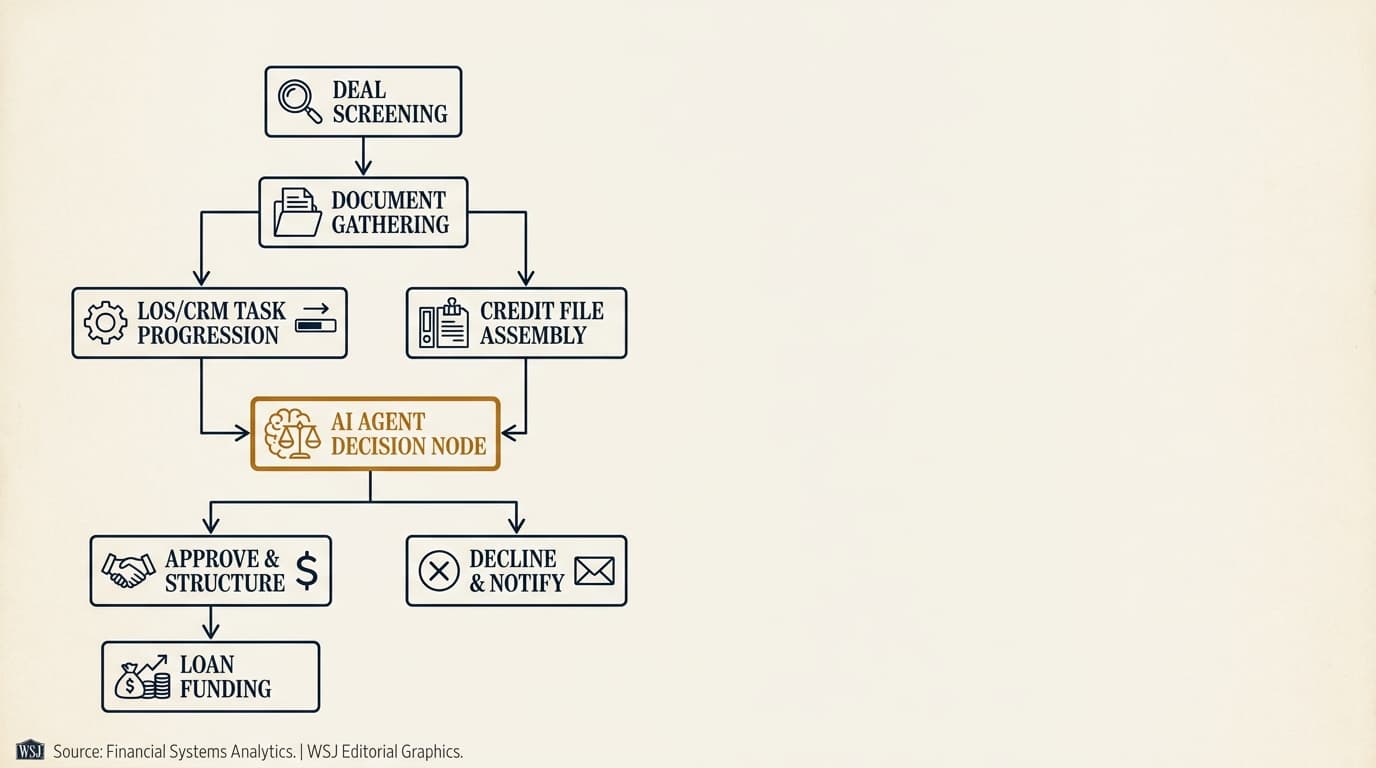

What AI agents actually do in a CRE underwriting workflow

The best underwriting agents handle bounded tasks with traceable inputs. They do not form an autonomous credit opinion the way a human underwriter does. They run repeatable workflows that analysts already do by hand.

Document and data orchestration starts with a complete source map

An underwriting agent should start by identifying which documents and data feeds are present, current, and relevant to the deal. That includes borrower financial statements, entity organizational documents, schedules of real estate owned, rent rolls, operating statements, appraisals, title materials, insurance certificates, and market comparables.

In stronger implementations, the agent creates a source register that shows each metric, its source document, the as-of date, and whether the field was extracted directly, inferred, or entered by a user. That source trace lets an underwriter challenge a debt service coverage ratio or occupancy figure without rechecking the entire file from scratch.
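A source register of this kind can be sketched as a small data structure. The example below is a minimal illustration, not a reference design: the `SourceEntry` fields, the `Method` labels, and the file names are all hypothetical, chosen to mirror the metric, source document, as-of date, and extraction method described above.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Method(Enum):
    EXTRACTED = "extracted"   # read directly from a document
    INFERRED = "inferred"     # derived by the agent from other fields
    USER_ENTERED = "user"     # typed in by an analyst


@dataclass(frozen=True)
class SourceEntry:
    metric: str
    value: float
    document: str             # hypothetical file name
    as_of: date
    method: Method


def trace(register: list[SourceEntry], metric: str) -> list[SourceEntry]:
    """Return every recorded source for a metric, newest first."""
    hits = [e for e in register if e.metric == metric]
    return sorted(hits, key=lambda e: e.as_of, reverse=True)


# Illustrative entries only; values and dates are made up.
register = [
    SourceEntry("occupancy", 0.93, "rent_roll.pdf", date(2025, 9, 30), Method.EXTRACTED),
    SourceEntry("occupancy", 0.95, "appraisal.pdf", date(2025, 6, 15), Method.EXTRACTED),
    SourceEntry("noi", 1_250_000.0, "t12.xlsx", date(2025, 8, 31), Method.INFERRED),
]

latest = trace(register, "occupancy")[0]
print(latest.document, latest.as_of, latest.method.value)
```

With a register like this, an underwriter questioning the occupancy figure can see both candidate sources and their dates in one lookup.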

Cross-document reconciliation is where much of the labor savings shows up

Underwriting files often contain conflicting numbers because documents were prepared on different dates or for different purposes. An agent can compare net rentable area across the appraisal, rent roll, and borrower package, compare borrower liquidity figures against bank statements, or catch when trailing-12 revenue does not line up with annualized in-place rent.

Those checks are especially useful when paired with specialized extraction workflows such as AI agents for CRE document analysis. The underwriting agent does not need to parse every document again if an earlier extraction layer has already normalized leases, rent rolls, and operating statements.
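A cross-document variance check can be quite small once the fields are normalized. The sketch below assumes the values have already been extracted; the function names, tolerances, and dollar and square-footage figures are hypothetical and would need to be set by each lender.

```python
def relative_variance(a: float, b: float) -> float:
    """Absolute difference as a share of the larger magnitude."""
    base = max(abs(a), abs(b))
    return 0.0 if base == 0 else abs(a - b) / base


def reconcile(field: str, values: dict[str, float], tolerance: float) -> dict:
    """Compare one field across documents and flag it if any pair diverges."""
    docs = list(values)
    worst = 0.0
    for i, d1 in enumerate(docs):
        for d2 in docs[i + 1:]:
            worst = max(worst, relative_variance(values[d1], values[d2]))
    return {"field": field, "max_variance": round(worst, 4), "flag": worst > tolerance}


# Net rentable area as reported in three documents (illustrative numbers).
nra = reconcile(
    "net_rentable_area_sf",
    {"appraisal": 102_500, "rent_roll": 98_400, "borrower_package": 102_500},
    tolerance=0.02,
)

# Trailing-12 revenue versus annualized in-place rent.
monthly_in_place_rent = 185_000
revenue = reconcile(
    "gross_revenue",
    {"t12": 2_200_000, "annualized_in_place": monthly_in_place_rent * 12},
    tolerance=0.05,
)

print(nra)
print(revenue)
```

In this example the square footage gap exceeds its tolerance and is flagged, while the revenue comparison falls inside its tolerance and passes.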

Pre-memo structuring reduces repetitive drafting, not review responsibility

An agent can draft a first-pass credit memo outline populated with property facts, sponsor background, key ratios, and exception summaries. The reviewer still needs to verify narrative conclusions, policy references, and mitigating factors before anything goes to committee.

That use case is narrower than AI agents for CRE loan origination, which covers broader workflow automation across intake, handoffs, and pipeline management. In underwriting, the job is analytical prep and exception visibility.

Borrower, property, and market data: what the agent assembles

A useful underwriting package pulls together three things: borrower strength, collateral performance, and market context. The agent's job is to assemble them into a comparable structure, tag missing support, and keep a clear record of where every number came from.

Borrower data assembly should emphasize liquidity, leverage, and track record

For the borrower and guarantor, the agent typically collects global cash, marketable securities, contingent liabilities, recourse exposure, schedule-of-real-estate-owned performance, bankruptcy history, and entity structure. It can also summarize sponsor experience by asset type, geography, and business plan stage, as long as the source data is documented.

Risk factor disclosures in commercial mortgage securitization filings with the Securities and Exchange Commission commonly treat borrower credit quality, sponsor concentration, and property cash flow deterioration as core drivers of credit performance. A private lender using an underwriting agent should focus there first, before getting distracted by weaker peripheral signals.

Property data assembly should show both current performance and stabilization assumptions

At the property level, the agent should assemble occupancy, in-place rent, lease rollover schedule, tenant concentration, trailing operating expenses, capital expenditure needs, deferred maintenance indicators, insurance coverage, and third-party valuation metrics. It should also separate actual historical data from pro forma assumptions.

According to the Appraisal Foundation's Uniform Standards of Professional Appraisal Practice, appraisal conclusions depend on stated assumptions, extraordinary assumptions, and limiting conditions. If an appraisal value depends on future lease-up or renovation milestones, the underwriting agent should present that dependency as a condition, not a settled fact.

Market data assembly is most useful when it frames risk, not when it pretends to replace local knowledge

Market data can include vacancy, absorption, asking rent trends, cap rate movement, sales comparables, permit activity, employment exposure, and concentration by industry. The agent should label whether the data came from a licensed third-party source, a public record, or borrower-provided support.

Regional releases from the U.S. Bureau of Labor Statistics and local public records show that conditions can diverge sharply by metro and submarket. A suburban infill industrial asset in Northern New Jersey and a small-bay industrial property in the Inland Empire may both show strong occupancy, but rollover risk, replacement supply, and tenant-credit patterns can differ enough to change exit assumptions. An agent can summarize those signals. It cannot replace market judgment from originators and underwriters who actually know the submarket.

How AI agents surface missing items and inconsistent inputs

Missing-item detection is one of the highest-return uses of AI in underwriting because it keeps analysts from wasting hours on files that are not decision-ready. A good agent does more than say a document is missing. It shows which downstream calculations are blocked and which assumptions would otherwise rest on thin air.

| Missing or inconsistent item | What the agent flags | Why it matters in underwriting |
|---|---|---|
| Stale rent roll | Rent roll date predates T-12 period or application submission | Occupancy, weighted average remaining lease term, and in-place rent may be unreliable |
| Borrower liquidity unsupported | Personal financial statement does not reconcile to recent account statements | Guarantor support and recourse strength may be overstated |
| Square footage mismatch | Appraisal GLA differs from rent roll or offering memorandum | LTV, per-square-foot value, and occupancy calculations may be distorted |
| Insurance gap | Coverage certificate missing, expired, or below policy minimum | Collateral protection and closing conditions are incomplete |
| Tax arrears or lien ambiguity | Public record status conflicts with borrower disclosure | Priority, closing funds, and title requirements may change |
| Incomplete entity chain | Ownership structure lacks formation documents or signatory proof | Authority, recourse, and compliance review cannot be completed |

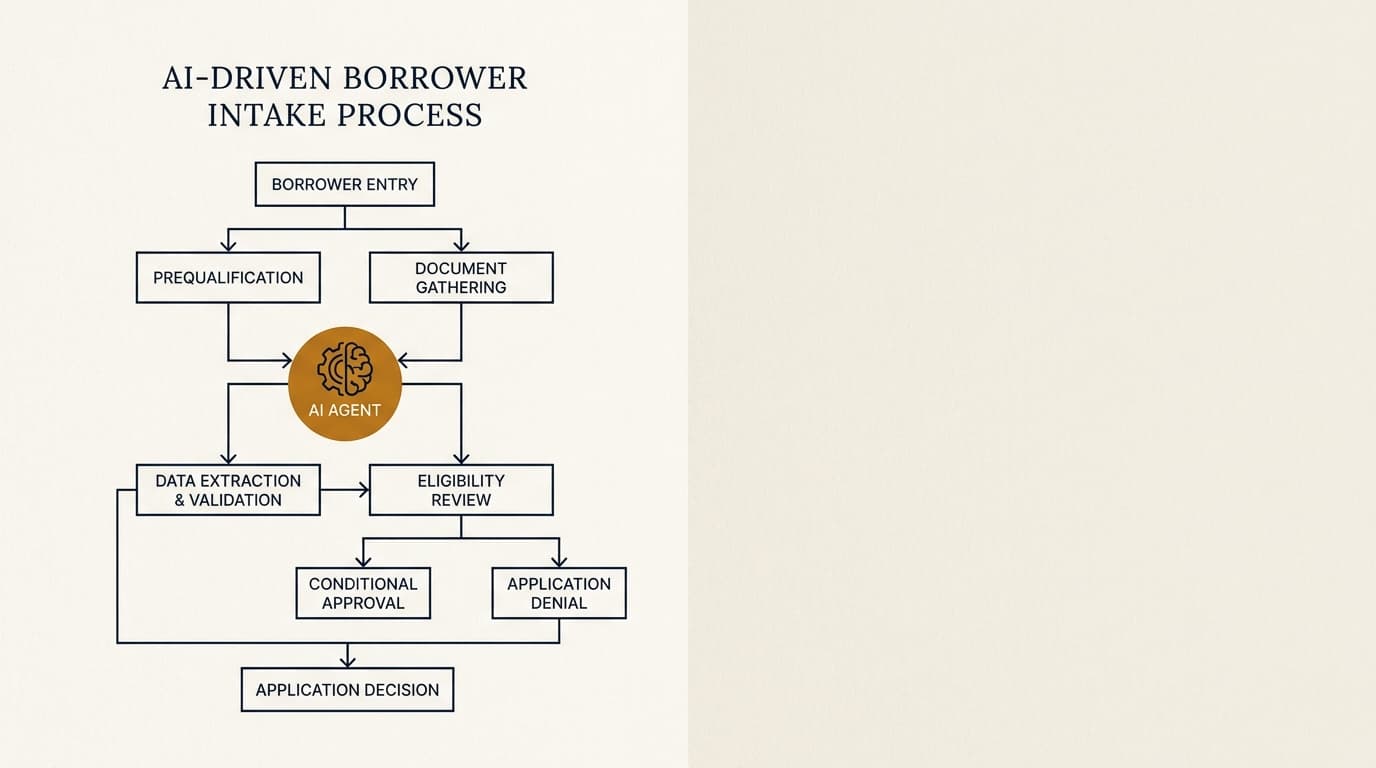

The practical benefit is queue management. Instead of assigning an analyst to discover halfway through that a file lacks the current operating statement, the agent can mark the package as incomplete and request the exact item needed. That sits next to, but is different from, AI agents for borrower intake, which operates earlier in the process and focuses on collecting and validating submissions.

For quality control, the agent should also assign confidence labels. A missing-item alert based on a clearly absent file is not the same as an inferred inconsistency based on OCR ambiguity or odd borrower formatting. Those should not be treated the same way operationally.
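One way to show which downstream calculations a missing document blocks is a simple dependency map. This is a sketch under stated assumptions: the `REQUIRES` map, the document names, and the two-level confidence labels are hypothetical and are not drawn from any particular product.

```python
# Hypothetical map from each calculation to the documents it depends on.
REQUIRES = {
    "dscr": {"t12_operating_statement", "loan_terms"},
    "ltv": {"appraisal", "loan_terms"},
    "occupancy": {"rent_roll"},
    "guarantor_liquidity": {"personal_financial_statement", "bank_statements"},
}


def blocked_calculations(present: set[str]) -> dict[str, list[str]]:
    """For each calculation that cannot run, list the missing documents."""
    blocked = {}
    for calc, needed in REQUIRES.items():
        missing = sorted(needed - present)
        if missing:
            blocked[calc] = missing
    return blocked


def alert_confidence(basis: str) -> str:
    """Label an alert by how it was detected (assumed two-level scheme)."""
    # A clearly absent file is a firmer signal than an OCR-based inference.
    return "high" if basis == "file_absent" else "needs_review"


present = {"rent_roll", "appraisal", "loan_terms", "personal_financial_statement"}
for calc, missing in blocked_calculations(present).items():
    print(f"{calc} blocked; request: {', '.join(missing)}")
```

The output names the exact item to request for each blocked calculation, which is what lets the file be returned before an analyst is assigned.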

How AI agents identify risk exceptions before committee review

Exception detection is where underwriting agents start to affect risk outcomes. They can compare deal inputs against lender policy thresholds, historical precedent, and transaction-specific conditions, then route the file for elevated review when needed.

Policy exception checks should be explicit and rules-based

Common examples include debt service coverage ratio below minimum, loan-to-value above program limits, tenant concentration above tolerance, cash-out proceeds beyond policy, unsupported interest reserve sizing, or guarantor liquidity below the required multiple of recourse exposure. The agent should cite the exact policy rule that was triggered and the input values behind it.

That is especially useful when paired with dedicated analytics on leverage metrics such as AI agents for DSCR and LTV analysis. In practice, lenders get cleaner exception reporting when ratio computation, source tagging, and policy threshold comparison are split into separate modules.
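A rules-based check that keeps ratio computation apart from threshold comparison might look like the sketch below. The rule IDs and limits are placeholders invented for illustration, not recommended policy values, and the ratio definitions are the standard ones (DSCR as NOI over annual debt service, LTV as loan amount over appraised value, debt yield as NOI over loan amount).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    rule_id: str      # hypothetical policy reference
    metric: str
    limit: float
    kind: str         # "min": value must be at least limit; "max": at most


def compute_ratios(noi: float, annual_debt_service: float,
                   loan_amount: float, appraised_value: float) -> dict[str, float]:
    """Ratio computation, kept separate from threshold comparison."""
    return {
        "dscr": noi / annual_debt_service,
        "ltv": loan_amount / appraised_value,
        "debt_yield": noi / loan_amount,
    }


def check(ratios: dict[str, float], rules: list[Rule]) -> list[dict]:
    """Return one exception record per breached rule, citing rule and value."""
    exceptions = []
    for r in rules:
        value = ratios[r.metric]
        breached = value < r.limit if r.kind == "min" else value > r.limit
        if breached:
            exceptions.append({"rule_id": r.rule_id, "metric": r.metric,
                               "value": round(value, 4), "limit": r.limit})
    return exceptions


# Placeholder thresholds for illustration only.
RULES = [
    Rule("POL-DSCR-01", "dscr", 1.25, "min"),
    Rule("POL-LTV-01", "ltv", 0.70, "max"),
    Rule("POL-DY-01", "debt_yield", 0.08, "min"),
]

ratios = compute_ratios(noi=1_200_000, annual_debt_service=1_000_000,
                        loan_amount=14_000_000, appraised_value=19_000_000)
for exc in check(ratios, RULES):
    print(exc)
```

Here the DSCR and LTV rules are breached and the debt yield rule is not, and each exception record carries the rule ID, the computed value, and the limit it was compared against.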

Pattern-based risk signals can help, but they need tighter controls

Some lenders also want the agent to identify softer signals: heavy near-term lease rollover, declining tenant sales in retail, repeated sponsor extension requests, unusual reserve draws, or appraisal assumptions that look aggressive relative to local market evidence. Those signals can be useful, but they are closer to model behavior than simple rule checks, so they need stronger validation and better reviewer training.

According to the National Institute of Standards and Technology AI Risk Management Framework, organizations should govern AI systems with validity, reliability, accountability, transparency, privacy, safety, and fairness in mind. In underwriting, that means documenting what a risk flag means, how it was generated, and what the reviewer is supposed to do with it.

A practical exception framework separates data issues, policy breaches, and judgment calls

Private lenders usually get better results when they classify exceptions into three buckets:

- Data exceptions: missing, stale, or conflicting inputs that block reliable analysis.

- Policy exceptions: measurable breaches of lender thresholds, such as LTV, DSCR, liquidity, concentration, or reserve requirements.

- Judgment exceptions: issues that need narrative assessment, such as sponsor credibility, market softness, lease-up execution risk, or exit uncertainty.

That structure works because only the first two categories are well suited to consistent machine detection. The third can be supported by AI summaries, but not decided by them.

Decision support vs automated approval: the control boundary that matters

The operational line is simple: a decision-support agent prepares, prioritizes, and explains; an automated approval system determines the outcome or materially narrows it without meaningful human review. Many lenders blur that line by accident when an agent-generated risk score starts driving declines, pricing, or committee access.

That distinction carries legal and governance consequences. According to the Consumer Financial Protection Bureau's 2022 circular on adverse action notifications, creditors using complex algorithms still have to provide specific and accurate reasons for adverse action. A private CRE lender may not always fall under the same product scope as consumer credit, but the control lesson holds: if a system affects disposition, the lender needs a rationale a human can understand and records showing how the outcome was reached.

According to the Federal Deposit Insurance Corporation statement on managing risks in AI and related technologies, governance, third-party risk management, data quality, and oversight remain central concerns. Even for nonbanks, those are the first areas investors, warehouse lenders, auditors, and litigation counsel are likely to examine after a disputed decline or a loss.

A defensible boundary for private lenders usually includes these rules:

- The agent may recommend missing items, risk flags, and memo language.

- The agent may calculate ratios only from disclosed inputs with a visible formula and source path.

- The agent may not issue an approval, decline, or pricing decision.

- The agent may not suppress committee escalation for exceptions without human signoff.

- The agent's outputs must be editable, reviewable, and logged.
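The rules above can be expressed as a default-deny guard in front of the agent. This is a minimal sketch: the action names, the `request_action` function, and the in-memory `audit_log` are assumptions made for illustration, and a production system would persist the log and authenticate the person signing off.

```python
from datetime import datetime, timezone
from typing import Optional

# Assumed action vocabulary; a real system would define its own.
ALLOWED = {"recommend_missing_item", "raise_risk_flag",
           "draft_memo_language", "calculate_ratio"}
PROHIBITED = {"approve", "decline", "set_pricing"}
NEEDS_SIGNOFF = {"suppress_escalation"}

audit_log: list[dict] = []


def request_action(action: str, signed_off_by: Optional[str] = None) -> bool:
    """Permit or refuse an agent action and log the outcome either way."""
    if action in ALLOWED:
        permitted, reason = True, "allowed"
    elif action in NEEDS_SIGNOFF:
        permitted = signed_off_by is not None
        reason = "signed off" if permitted else "missing human signoff"
    elif action in PROHIBITED:
        permitted, reason = False, "reserved for credit authority"
    else:
        # Unknown actions are refused rather than silently allowed.
        permitted, reason = False, "unknown action"
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "permitted": permitted,
        "reason": reason,
        "signed_off_by": signed_off_by,
    })
    return permitted


print(request_action("raise_risk_flag"))
print(request_action("decline"))
print(request_action("suppress_escalation"))
print(request_action("suppress_escalation", signed_off_by="credit.officer"))
```

Refusing unknown actions by default matters here: it keeps a newly added agent capability from affecting disposition until someone has classified it.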

A practical control framework for private lenders

Private lenders do not need a bank-scale model governance program to use underwriting agents, but they do need a documented control structure. The strongest setups treat the agent like a governed production system, not a handy productivity plugin.

Minimum controls include source traceability, thresholds, and override logs

At a minimum, every extracted or calculated field should have a source reference, date stamp, and confidence indicator. The system should also record who reviewed each flagged item, whether they accepted or overrode it, and why.
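A reviewer log along these lines can be sketched in a few lines. The `Review` record, the flag IDs, and the choice to reject entries that lack a reviewer or rationale are illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Review:
    flag_id: str
    reviewer: str
    disposition: str      # "accepted" or "overridden"
    rationale: str
    reviewed_at: datetime


def record_review(log: list, flag_id: str, reviewer: str,
                  disposition: str, rationale: str) -> Review:
    """Append a review record; refuse entries with no named reviewer or reason."""
    if disposition not in {"accepted", "overridden"}:
        raise ValueError(f"unknown disposition: {disposition}")
    if not reviewer.strip() or not rationale.strip():
        raise ValueError("reviewer and rationale are both required")
    entry = Review(flag_id, reviewer, disposition, rationale,
                   datetime.now(timezone.utc))
    log.append(entry)
    return entry


def override_rate(log: list) -> float:
    """Share of reviewed flags that the reviewer overrode."""
    if not log:
        return 0.0
    return sum(1 for r in log if r.disposition == "overridden") / len(log)


log: list = []
record_review(log, "FLAG-001", "a.analyst", "accepted", "Rent roll confirmed stale")
record_review(log, "FLAG-002", "a.analyst", "overridden",
              "OCR misread unit count; source page checked manually")
print(f"override rate: {override_rate(log):.0%}")
```

Tracking the override rate over time gives a rough signal of whether a given flag type is earning reviewer trust or generating noise.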

That level of auditability is similar in spirit to the controls discussed in AI agents for CRE lending compliance, though underwriting needs more emphasis on analytical lineage and policy mapping.

Validation testing should focus on the errors that change credit outcomes

Before rollout, lenders should test how often the agent misses missing documents, misclassifies exceptions, or carries stale values forward from prior versions. The key metric is not average extraction accuracy in the abstract. It is decision-impact error rate. A system that is 95% accurate on low-value fields but shaky on lease rollover dates or guarantor liquidity can still create real underwriting risk.
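The gap between average accuracy and decision-impact error rate is easy to show with a toy benchmark. The definition used here is an assumption made for illustration: the error rate measured only over fields that feed a ratio or an exception. The `DECISION_CRITICAL` set and the benchmark results are hypothetical.

```python
# Assumed set of fields where an error would change a ratio or an exception.
DECISION_CRITICAL = {"lease_rollover_date", "guarantor_liquidity",
                     "noi", "loan_amount"}


def error_rates(results: list[tuple[str, bool]]) -> dict[str, float]:
    """results holds (field_name, was_correct) pairs from a benchmark run."""
    total = len(results)
    if total == 0:
        return {"overall_accuracy": 0.0, "decision_impact_error_rate": 0.0}
    errors = sum(1 for _, ok in results if not ok)
    critical = [ok for field, ok in results if field in DECISION_CRITICAL]
    critical_errors = sum(1 for ok in critical if not ok)
    return {
        "overall_accuracy": 1 - errors / total,
        "decision_impact_error_rate": (
            critical_errors / len(critical) if critical else 0.0
        ),
    }


# Toy benchmark: 36 low-value fields all correct, 4 critical fields with 2 wrong.
results = [("property_name", True)] * 36 + [
    ("noi", True),
    ("loan_amount", True),
    ("lease_rollover_date", False),
    ("guarantor_liquidity", False),
]
rates = error_rates(results)
print(f"overall accuracy: {rates['overall_accuracy']:.0%}")
print(f"decision-impact error rate: {rates['decision_impact_error_rate']:.0%}")
```

In this toy run the system scores 95% overall while getting half of the decision-critical fields wrong, which is the pattern a headline accuracy figure can hide.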

| Control area | What to document | Failure if absent |
|---|---|---|
| Source lineage | Document name, page, field, as-of date, extraction method | Analysts cannot verify or challenge outputs efficiently |
| Policy mapping | Threshold table by product, geography, and exception authority | Agent flags become inconsistent across programs |
| Human review | Named reviewer, timestamp, disposition, rationale | Outputs drift into de facto auto-decisioning |
| Testing | Benchmarked files, exception recall, false positive rate | Management lacks evidence the system is fit for use |
| Change management | Model/version release notes and rollback procedures | Silent changes alter underwriting behavior without notice |
| Vendor oversight | Security, subcontractors, retention, training-data terms | Confidential data and reliability risks remain unmanaged |

Edge cases are where weak underwriting agents fail first

Mixed-use assets, fractured condo structures, ground leases, hotels, transitional collateral, and sponsor recourse carveout issues routinely break simplistic workflows. A lender should assume that edge cases will need either manual handling or a separate ruleset. If the system was trained mostly on stabilized multifamily and industrial files, management should not let it drift quietly into hospitality or construction-heavy bridge deals.

That issue is even sharper for shorter-duration or transitional products. Lenders focused on those transactions may also need the more specific workflow discussed in AI agents for bridge loans.

Where AI underwriting helps most by loan type and lender strategy

AI underwriting creates the clearest value where lenders deal with high document volume, repeated policy checks, and a meaningful share of incomplete or inconsistent files. It helps less when every deal is highly bespoke and committee judgment turns more on negotiated structure than standardized metrics.

| Lender profile | High-value underwriting use case | Lower-value use case |
|---|---|---|
| Bridge lender | Tracking missing diligence items, reserve assumptions, and extension triggers | Fully automated sponsor quality scoring |
| Stabilized multifamily lender | Rent roll/T-12 reconciliation, ratio calculation, insurance and tax checks | Narrative market thesis generation without local review |
| Small-balance lender | File triage, standardized exception tagging, memo drafting | Bespoke structure recommendation for unusual collateral |
| Debt fund with varied collateral | Centralizing source data and committee package preparation | Cross-asset automated approval logic |

The first real win usually is not faster credit approval in some vague sense. It is fewer analyst hours spent assembling facts, chasing missing items, and checking whether a policy breach has already happened. Lenders that push for auto-approval too early usually buy themselves governance trouble before they get meaningful efficiency.

Implementation sequence for private lenders

A staged rollout reduces both operational and governance risk. The sequence below tends to hold up better than launching one broad model and asking the credit team to work around it.

- Define the underwriting tasks the agent may perform, such as source collection, field extraction, reconciliation, and exception tagging.

- Map each task to authoritative inputs, required review steps, and prohibited actions.

- Build a source-of-truth register showing where every ratio input comes from and how stale-data rules work.

- Test the agent on a benchmark set of approved, declined, and withdrawn files across multiple asset types.

- Measure decision-impact errors, including missed exceptions, false positives, and stale-field carryover.

- Set confidence thresholds that route uncertain outputs to manual review instead of silent acceptance.

- Require human signoff before any agent output enters a credit memo, committee package, or decline rationale.

- Monitor overrides, recurring extraction failures, and version changes after deployment.
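The confidence-threshold step in this sequence can be sketched as a small routing function. The 0.90 threshold, the queue names, and the output fields are placeholders for illustration; each lender would calibrate its own threshold against benchmark results.

```python
def route(output: dict, threshold: float = 0.90) -> str:
    """Send low-confidence or unscored outputs to manual review."""
    confidence = output.get("confidence")
    if confidence is None or confidence < threshold:
        return "manual_review"
    # Above threshold still requires human signoff, just on the standard path.
    return "standard_signoff"


# Illustrative agent outputs; values and scores are made up.
outputs = [
    {"field": "occupancy", "value": 0.93, "confidence": 0.98},
    {"field": "lease_rollover_date", "value": "2026-03-31", "confidence": 0.72},
    {"field": "guarantor_liquidity", "value": 4_100_000, "confidence": None},
]

queues = {o["field"]: route(o) for o in outputs}
print(queues)
```

Note that neither path ends in acceptance without a person: the threshold only decides how much scrutiny an output gets before signoff, and a missing score is treated as uncertain rather than as a pass.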

For lenders building a broader operating model around these systems, the pillar page on AI agents for private commercial real estate lending covers portfolio-wide workflow, ROI, and risk issues beyond underwriting.

AI agents for CRE underwriting are useful when they make credit work more reviewable

AI agents for CRE underwriting should cut manual assembly work, expose missing support, and make policy exceptions easier to spot. They become risky when lenders let recommendation systems drift into opaque credit decisioning without source traceability, testing, and named human accountability.

For private lenders, the most durable design is narrow and evidence-heavy: visible inputs, explicit rules, confidence thresholds, override logs, and a hard separation between analytical support and final credit authority. That gets you efficiency without muddying who made the decision or why.

Frequently Asked Questions

What do AI agents for CRE underwriting actually automate?

They typically automate data gathering, document classification, field extraction, cross-document checks, missing-item alerts, ratio preparation, and exception tagging. In a controlled setup, they do not issue final approvals or declines. The highest-value tasks are usually rent roll and operating statement reconciliation, stale-document detection, and preparation of an audit-ready underwriting file.

Can a private lender use AI to approve CRE loans automatically?

A private lender can technically configure a system that routes or filters files automatically, but that is not the same thing as a defensible credit approval process. If AI outputs materially affect disposition, pricing, or escalation, the lender needs documented rationale, human review, testing, and controls over model changes. In practice, most lenders are better served by decision support than automated approval.

Which CRE asset types benefit most from underwriting agents?

Stabilized multifamily, industrial, and other assets with fairly standardized reporting usually benefit first because the documents and policy checks are more repeatable. Transitional hospitality, mixed-use, construction-heavy, and ground-lease structures need more manual handling because the underwriting logic is less standardized and exceptions are more common.

How should lenders handle regional market variation in AI underwriting?

Regional variation should be handled through market-specific assumptions, data-source labeling, and local reviewer oversight. A debt yield or exit cap assumption that looks conservative in Dallas may be aggressive in San Francisco, and tenant rollover risk in South Florida office is not interchangeable with suburban Midwest office. The agent can assemble metro-level indicators, but local credit staff should validate the market read.

What controls matter most before deploying AI agents for CRE underwriting in 2026?

The core controls are source traceability, policy-threshold mapping, benchmark testing, confidence-based escalation, human signoff, override logging, vendor oversight, and version control. If a lender cannot show where a number came from, which policy rule was triggered, who reviewed it, and what changed between software versions, the deployment is not ready for production underwriting.