AI Agents for CRE Loan Origination: What to Automate


Commercial real estate lenders still burn origination time on work that has nothing to do with actual credit judgment: missed inbox inquiries, half-complete application packages, manual broker updates, and slow internal handoffs. AI agents for CRE loan origination work best in that front-end layer, where the tasks are rule-based, high-volume, and easy to measure. This article breaks down what to automate, what to keep with human credit teams, and how private lenders should set controls, audit trails, and escalation rules around both.

McKinsey research on generative AI and work activities puts customer service, data collection, and routine administrative work near the top of the list for automation potential. In lending operations, that maps pretty directly to inquiry intake, document follow-up, workflow routing, and borrower communication. But Consumer Financial Protection Bureau guidance on consumer finance automation oversight makes the other half of the point: institutions still own the outcome, even when software is involved. For CRE lenders in 2026, the practical answer is simple. Automate repeatable intake and coordination work. Keep credit judgment, exception handling, and final approvals with trained staff.

Key Takeaways

- AI agents for CRE loan origination are strongest at inquiry capture, lead triage, application completeness checks, broker updates, and internal task routing.

- Human teams should keep control of credit judgment, structure decisions, policy exceptions, fraud concerns, and any file with conflicting or missing facts.

- The National Institute of Standards and Technology AI Risk Management Framework calls for documented roles, monitoring, and human oversight for higher-risk decisions.

- The best origination setups separate operational automation from underwriting authority and leave a clear audit trail for every message, task, escalation, and override.

AI agents for CRE loan origination work best on repetitive front-end operations

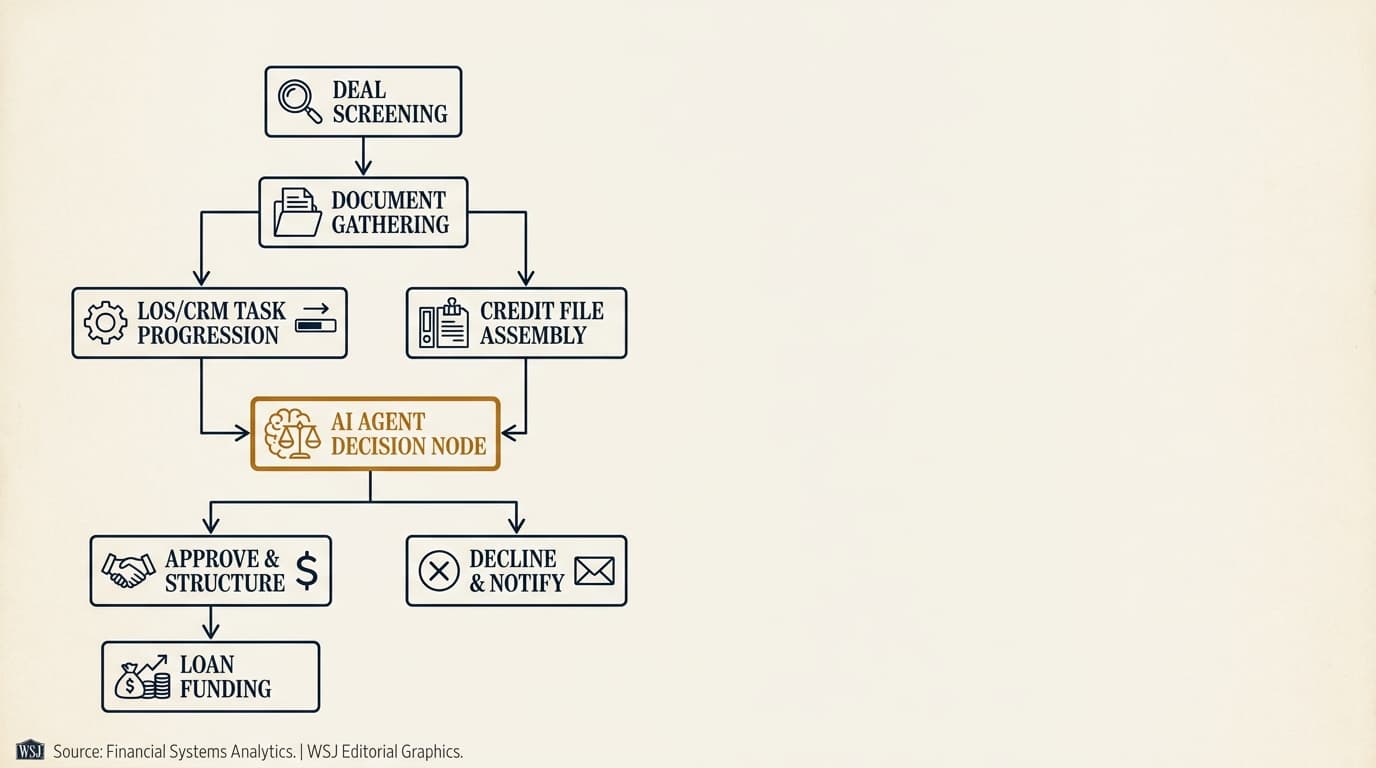

AI agents for CRE loan origination are software agents that watch intake channels, apply pre-set business rules, trigger next steps, and record actions across the origination workflow. They are most useful before formal underwriting starts, when the real constraint is not credit analysis but operational throughput.

Most private CRE lenders run into the same front-end bottlenecks: web form submissions dropping into a shared inbox, brokers emailing partial packages, analysts retyping sponsor and property details into a CRM, and origination managers chasing status updates across teams. The Mortgage Bankers Association commercial and multifamily finance research hub shows how CRE lending volume and staffing conditions move with rate cycles and transaction activity. That makes rigid manual workflows hard to scale, whether the market is hot or dead quiet.

That is the lane where AI agents can help without crossing into underwriting authority. An origination agent can read an inquiry, classify the asset type, compare stated leverage to policy thresholds, request missing documents, create tasks in the loan origination system, and notify the right person when a file hits an escalation rule. It should not decide whether weak guarantor liquidity is acceptable, whether tertiary-market retail deserves an exception, or whether a sponsor explanation really clears up an occupancy issue. Those are human calls.

For a broader operating model across the lending lifecycle, see AI agents for private commercial real estate lending.

What AI agents can handle in CRE loan origination

The safest automation targets in origination are standardized interactions, predictable handoffs, and administrative checks. The weakest targets are open-ended judgment calls made with incomplete facts and real downside if the system gets them wrong.

| Origination task | Good fit for AI agent | Human review required |
|---|---|---|
| Inbound inquiry capture | Yes: monitor email, forms, and CRM entries; classify source and loan type | Review only if inquiry is ambiguous or high-value |
| Lead qualification | Yes: compare stated loan request to screening rules | Required for edge cases, exceptions, and nuanced sponsor situations |
| Application intake | Yes: collect documents, check completeness, extract structured fields | Required when documents conflict or key fields are unreadable |
| Broker updates | Yes: send status messages based on workflow events and SLAs | Required for pricing discussions, declines, and negotiated terms |
| Task routing | Yes: assign analysts, queue files, track deadlines | Required when workload, expertise, or politics require manual override |
| Credit decisioning | No: not as a standalone authority | Always |
| Policy exceptions | No: flag and summarize only | Always |

Federal Reserve guidance on model risk management draws a line between tools that inform decisions and processes that actually make them. For private CRE lenders, that distinction is not academic. If an agent is scoring, prioritizing, or recommending actions, the lender still needs clear documentation of inputs, rules, overrides, and who reviewed what.

Inquiry capture and lead routing

Inquiry capture is one of the cleanest automation opportunities in origination because the work is repetitive and time-sensitive. An AI agent can ingest inbound emails, website submissions, broker portal messages, and referral notes, then normalize them into one intake queue.

In practice, the agent should tag each inquiry with at least six fields from day one: source, requested loan amount, asset type, property location, timing, and whether a rent roll, trailing 12-month operating statement, or borrower summary was attached. If the source data is incomplete, the agent can send a standard follow-up request within minutes instead of waiting for a coordinator to get to the inbox. The U.S. Small Business Administration Lender Match program documentation makes a similar point: structured intake improves routing by collecting consistent information upfront. Different product, same operational lesson.
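The six intake fields can be sketched as a small record type. This is a minimal illustration, not a reference schema: the class name `Inquiry`, the field names, and the follow-up wording are all assumptions made for the example. The point it shows is that an unknown value stays `None` and triggers a follow-up request rather than being filled in by the agent.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Inquiry:
    """One normalized intake record. Field names are illustrative assumptions."""
    source: Optional[str] = None
    requested_loan_amount: Optional[float] = None
    asset_type: Optional[str] = None
    property_location: Optional[str] = None
    timing: Optional[str] = None
    # True/False once attachments were checked for a rent roll, T-12, or
    # borrower summary; None means the check has not happened yet.
    has_supporting_docs: Optional[bool] = None


def missing_fields(inquiry: Inquiry) -> list[str]:
    """Return the names of fields that were not supplied. Values are never guessed."""
    return [f.name for f in fields(inquiry) if getattr(inquiry, f.name) is None]


def follow_up_request(inquiry: Inquiry) -> Optional[str]:
    """Build a standard follow-up message, or None when nothing is missing."""
    missing = missing_fields(inquiry)
    if not missing:
        return None
    items = ", ".join(name.replace("_", " ") for name in missing)
    return f"Thanks for your inquiry. To route it, we still need: {items}."
```

In a real deployment the follow-up text would come from an approved template rather than a string built in code.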

A practical routing model usually looks like this:

- Capture the inquiry from every approved intake channel.

- Classify the request by source, loan type, asset type, and geography.

- Check the submission against minimum intake fields.

- Request missing items automatically using approved templates.

- Route the file to the correct origination queue based on rules.

- Escalate high-value or time-sensitive submissions to a human originator.

This is also where lenders run into channel inconsistency fast. Brokers email narrative summaries that do not line up with form fields. Borrowers submit a property address but no borrowing entity name. Referral partners forward scraps from a phone call. A capable origination agent needs fallback logic for all of that, plus a hard rule against inventing missing facts.

What good routing rules look like

Routing rules should be explicit enough to audit and narrow enough to avoid hidden decision-making. They should direct work, not make credit calls.

Examples include assigning multifamily bridge requests above a lender-defined size threshold to a senior originator, sending industrial refinance requests in approved states to a regional queue, or flagging hospitality deals for manual triage regardless of leverage because that asset class usually needs more context. The upside is consistency. The risk is that unofficial policy gets baked into routing logic. If a rule changes how a deal is treated in a meaningful way, it belongs in formal policy or at least a documented operating procedure.
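One way to keep routing rules auditable is to store them as named, ordered data instead of burying them in branching logic. The sketch below assumes a first-match-wins policy; the size threshold, the approved-state list, the queue names, and the rule IDs are hypothetical placeholders, not values from any lender's policy.

```python
from dataclasses import dataclass
from typing import Callable

SENIOR_SIZE_THRESHOLD = 10_000_000                # hypothetical lender-defined threshold
APPROVED_STATES = frozenset({"TX", "GA", "NC"})   # hypothetical approved states


def is_hospitality(deal: dict) -> bool:
    return deal.get("asset_type") == "hospitality"


def is_large_multifamily_bridge(deal: dict) -> bool:
    return (
        deal.get("asset_type") == "multifamily"
        and deal.get("loan_type") == "bridge"
        and (deal.get("amount") or 0) > SENIOR_SIZE_THRESHOLD
    )


def is_regional_industrial_refi(deal: dict) -> bool:
    return (
        deal.get("asset_type") == "industrial"
        and deal.get("loan_type") == "refinance"
        and deal.get("state") in APPROVED_STATES
    )


@dataclass(frozen=True)
class RoutingRule:
    rule_id: str                      # recorded in the audit trail
    matches: Callable[[dict], bool]
    queue: str


# Order matters: the first matching rule wins.
RULES = [
    RoutingRule("R1-hospitality-triage", is_hospitality, "manual_triage"),
    RoutingRule("R2-large-mf-bridge", is_large_multifamily_bridge, "senior_originator"),
    RoutingRule("R3-industrial-refi", is_regional_industrial_refi, "regional_queue"),
]


def route(deal: dict) -> tuple[str, str]:
    """Return (queue, rule_id) so every routing decision can be traced to a rule."""
    for rule in RULES:
        if rule.matches(deal):
            return rule.queue, rule.rule_id
    return "general_intake", "DEFAULT"
```

Returning the rule ID alongside the queue means a reviewer can see which written rule moved a file, which makes it easier to spot unofficial policy creeping into the routing table.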

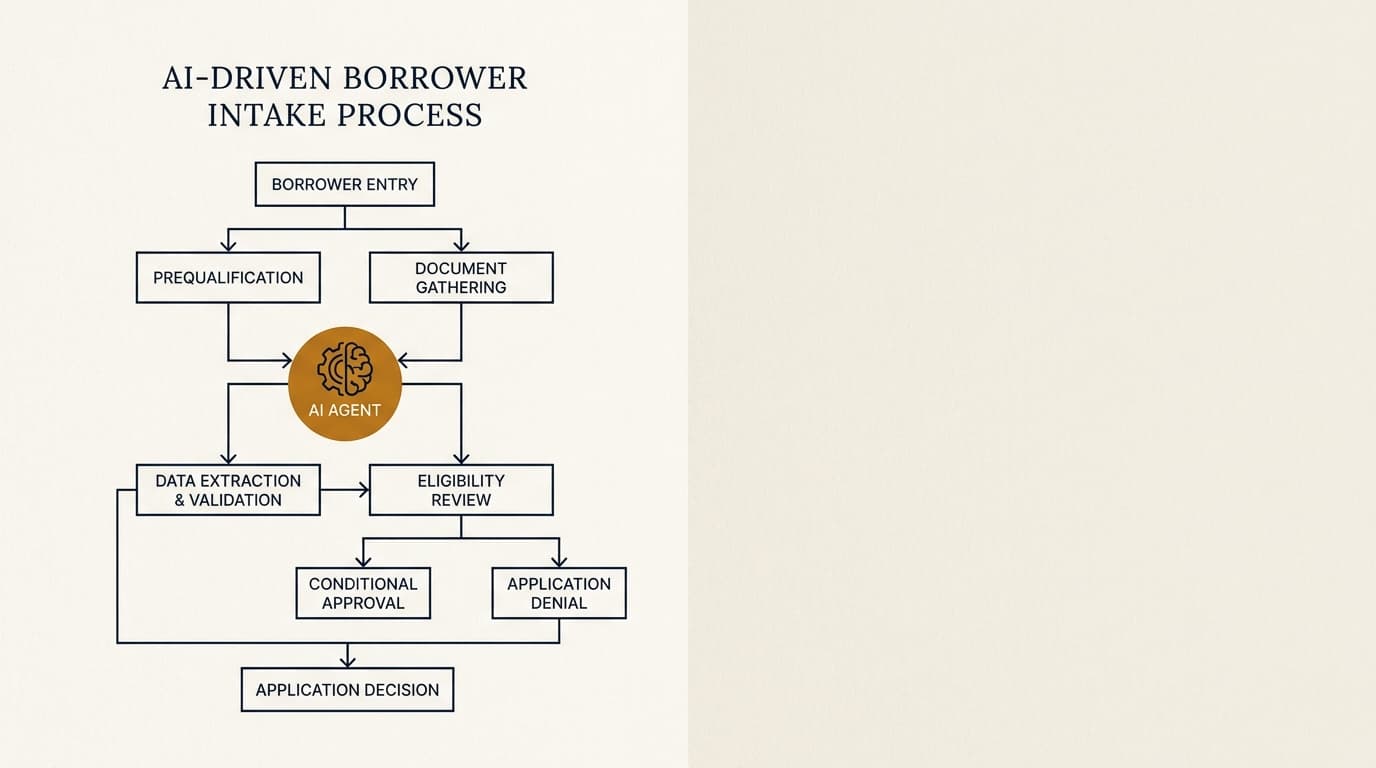

Lead qualification and pre-screening

Lead qualification is a good fit for AI support when the lender already has a written credit box. Without documented screening rules, automation just makes inconsistency faster.

An origination agent can compare stated deal attributes against pre-screen criteria such as minimum and maximum loan size, asset class focus, geographic limits, requested leverage bands, occupancy floor, and required sponsor experience. It can then sort leads into three buckets: within box, outside box, or needs review. That middle bucket matters more than it sounds. Without it, you get false precision.

Consider three examples:

- Clearly within box: $8 million multifamily refinance, 68% stated loan-to-value, stabilized occupancy, experienced sponsor, approved market.

- Clearly outside box: $1.2 million owner-user request when the platform does not lend on owner-occupied properties.

- Requires review: 74% leverage request on a partially stabilized industrial asset with a strong repeat sponsor in a market the lender knows well.

The third case comes up all the time in private CRE lending. A rigid automation rule may kick out a file that a credit team would absolutely want to discuss. That is why AI-assisted pre-screening works best as queue management and recommendation support, not autonomous decline authority.
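The three-bucket sort can be sketched as follows. The credit box values, field names, and the `prescreen` function are assumptions for illustration. The sketch also makes one deliberate design choice that a lender may not share: only explicit product exclusions land in "outside box", while missing data and numeric misses go to "needs review" so a person sees them.

```python
from enum import Enum


class Bucket(Enum):
    WITHIN_BOX = "within box"
    OUTSIDE_BOX = "outside box"
    NEEDS_REVIEW = "needs review"


# Hypothetical written credit box; every value here is a placeholder.
CREDIT_BOX = {
    "asset_classes": {"multifamily", "industrial", "retail"},
    "min_loan": 2_000_000,
    "max_loan": 50_000_000,
    "max_ltv": 0.70,
    "lends_owner_occupied": False,
}

REQUIRED_FIELDS = ("asset_type", "loan_amount", "ltv", "owner_occupied", "stabilized")


def prescreen(deal: dict, box: dict = CREDIT_BOX) -> tuple[Bucket, list[str]]:
    """Sort a lead into a bucket and return the reasons. This recommends; it never declines."""
    # 1. Explicit product exclusions are the only path to OUTSIDE_BOX.
    exclusions = []
    if deal.get("owner_occupied") is True and not box["lends_owner_occupied"]:
        exclusions.append("owner-occupied property")
    asset = deal.get("asset_type")
    if asset is not None and asset not in box["asset_classes"]:
        exclusions.append(f"asset class not in box: {asset}")
    if exclusions:
        return Bucket.OUTSIDE_BOX, exclusions

    # 2. Incomplete data always goes to a person; the agent does not guess.
    missing = [k for k in REQUIRED_FIELDS if deal.get(k) is None]
    if missing:
        return Bucket.NEEDS_REVIEW, [f"missing: {', '.join(missing)}"]

    # 3. Numeric misses are flagged for discussion, not rejected.
    flags = []
    if not box["min_loan"] <= deal["loan_amount"] <= box["max_loan"]:
        flags.append("loan size outside stated range")
    if deal["ltv"] > box["max_ltv"]:
        flags.append("leverage above standard limit")
    if not deal["stabilized"]:
        flags.append("property not fully stabilized")
    return (Bucket.NEEDS_REVIEW, flags) if flags else (Bucket.WITHIN_BOX, [])
```

Under these assumed thresholds, the 74% partially stabilized industrial request lands in the review queue with two flags attached, which gives the credit team a starting point instead of a rejection.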

For lenders building more formal downstream credit workflows, related topics are covered in AI agents for CRE underwriting and CRE loan origination workflow AI agent.

Application intake and completeness review

Application intake is where origination teams often lose the most time, because small delays stack up. A missing schedule of real estate owned or an outdated rent roll can leave a file sitting for days before anyone notices.

An AI agent can enforce a lender's intake checklist, compare received documents against required items, and send targeted follow-up requests. The real job is not just collecting files. It is deciding whether the package is complete enough for the next stage. The Fannie Mae Multifamily Selling and Servicing Guide reflects the same operating logic: documentation standards exist because intake quality affects credit review and processing integrity. Private lenders may use different checklists, but the principle holds.

A strong origination intake agent should be able to:

- Match incoming attachments to document categories.

- Identify stale documents based on lender-defined age thresholds.

- Detect missing signatures or blank mandatory fields in application forms.

- Extract core data into the CRM or loan origination system.

- Generate a deficiency list with source references.

- Pause advancement until required items are confirmed.
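A minimal sketch of the checklist comparison, the staleness test, and the deficiency list is shown below. The document categories and age limits in `CHECKLIST` are hypothetical, and the sketch assumes an upstream step has already matched each attachment to a category and read its as-of date.

```python
from datetime import date
from typing import Optional

# Hypothetical checklist: document category -> maximum age in days (None = no age limit).
CHECKLIST: dict[str, Optional[int]] = {
    "rent_roll": 60,
    "trailing_12": 90,
    "schedule_of_reo": 180,
    "borrower_summary": None,
}


def deficiency_list(received: dict[str, date], today: date,
                    checklist: dict[str, Optional[int]] = CHECKLIST) -> list[str]:
    """Compare received documents (category -> as-of date) against the checklist."""
    issues = []
    for category, max_age in checklist.items():
        as_of = received.get(category)
        if as_of is None:
            issues.append(f"{category}: missing")
        elif max_age is not None and (today - as_of).days > max_age:
            issues.append(
                f"{category}: stale (dated {as_of.isoformat()}, limit {max_age} days)"
            )
    return issues


def can_advance(received: dict[str, date], today: date) -> bool:
    """Pause advancement until the deficiency list is empty."""
    return not deficiency_list(received, today)
```

Signature checks, blank-field detection, and data extraction are left out here because they depend on the document-processing tools a lender actually uses.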

The edge cases still need human review. If the rent roll date conflicts with the borrower summary, if borrower entity names differ across organizational documents, or if a trailing 12-month statement looks internally inconsistent, the file should move to manual review. Do not force the agent to guess. More advanced extraction and validation issues are better addressed in AI agents for CRE document analysis.

A useful completeness standard for private lenders

Many lenders treat intake as complete once documents have arrived. That bar is too low. The more useful question is whether the file is complete enough for the next decision point.

For example, if the next step is an initial quote review, the minimum package may be a borrower summary, property address, rent roll, historical operating figures, requested loan terms, and ownership structure. If the next step is full underwriting, the threshold is much higher. Separate intake standards by stage keep teams from over-collecting too early and under-collecting when speed matters.
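Stage-specific standards can be expressed as one required set per decision point. In the sketch below, the `initial_quote` set mirrors the example package described above. The two extra items in `full_underwriting` are hypothetical stand-ins, since the actual underwriting checklist is lender-specific.

```python
INITIAL_QUOTE = frozenset({
    "borrower_summary",
    "property_address",
    "rent_roll",
    "historical_operating_figures",
    "requested_loan_terms",
    "ownership_structure",
})

STAGE_REQUIREMENTS = {
    "initial_quote": INITIAL_QUOTE,
    # Hypothetical additions for illustration only; a real list would be longer.
    "full_underwriting": INITIAL_QUOTE | {
        "schedule_of_real_estate_owned",
        "guarantor_financial_statement",
    },
}


def missing_for_stage(stage: str, received: set[str]) -> list[str]:
    """Return what is still needed for the named stage, sorted for stable output."""
    return sorted(STAGE_REQUIREMENTS[stage] - set(received))
```

A file can then be complete for a quote review while still showing open items for underwriting, which is the distinction the stage-based standard is meant to capture.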

Broker communication and status updates

Broker communication is expensive operationally because it is frequent, repetitive, and tied to deadlines. It is also one of the fastest ways to damage a relationship if the message is wrong or inconsistent.

An origination agent can send acknowledgments, missing-item requests, receipt confirmations, stage updates, and reminders tied to actual workflow events. That is useful because brokers usually want three things at the front end: confirmation that the request came in, clarity on what is missing, and a clear next step. An agent can handle all of that as long as the message is based on system facts rather than guesses.

The boundaries here should be strict. AI can send: "We received the package at 10:42 a.m.; the current deficiency list includes an updated rent roll and sponsor liquidity statement." AI should not send: "The deal looks good," "pricing should be competitive," or "approval is likely." Those are interpretive statements with credit and commercial consequences.

This matters for auditability. The National Institute of Standards and Technology Privacy Framework calls for systems that support appropriate data governance and traceability. In plain CRE lending terms, every outbound broker message generated by an agent should be logged with a timestamp, trigger event, data source, template version, and any human edits.
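A template-plus-log approach might look like the sketch below. The template ID, version label, trigger name, and `OutboundLogEntry` fields are assumptions chosen to match the logging list above. `string.Template.substitute` is used on purpose: it raises `KeyError` when a placeholder has no value, so a message cannot go out with a fact the system does not have.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from string import Template

# Approved templates keyed by (template_id, version). Both keys are hypothetical.
TEMPLATES = {
    ("deficiency_notice", "v3"): Template(
        "We received the package at $received_at; "
        "the current deficiency list includes $items."
    ),
}


@dataclass
class OutboundLogEntry:
    timestamp: datetime
    trigger_event: str
    data_source: str
    template_id: str
    template_version: str
    body: str
    human_edits: list[str] = field(default_factory=list)


def render_message(template_id: str, version: str, trigger_event: str,
                   data_source: str, facts: dict) -> OutboundLogEntry:
    """Fill an approved template from system facts and return the log record."""
    # substitute() raises KeyError if any placeholder lacks a value.
    body = TEMPLATES[(template_id, version)].substitute(facts)
    return OutboundLogEntry(
        timestamp=datetime.now(timezone.utc),
        trigger_event=trigger_event,
        data_source=data_source,
        template_id=template_id,
        template_version=version,
        body=body,
    )
```

Because the agent can only fill placeholders in approved text, interpretive statements about pricing or approval odds have no path into an outbound message unless someone writes them into a template.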

Task routing, SLA management, and queue control

Origination delays usually come from handoffs, not analysis. A file sits because nobody was assigned, a deadline was missed, or the next team assumed somebody else owned the task.

An AI agent can reduce those failures by creating tasks automatically, assigning owners by queue rules, monitoring service-level targets, and escalating stalled items. This is less visible than borrower-facing automation, but in many shops it matters more because it cuts silent delay.

| Workflow point | Typical manual failure | Agent control |
|---|---|---|
| New inquiry received | No owner assigned for several hours | Create owner task immediately and notify queue |
| Deficiency request sent | No follow-up after 48 hours | Trigger reminder and escalate after SLA breach |
| Application deemed complete | Analyst assignment delayed | Route to underwriting prep queue automatically |
| Broker asks for status | Originator manually checks multiple systems | Surface current stage and pending items from workflow log |

A useful design rule is this: the agent should manage deadlines and visibility, while managers still control priorities. If a senior originator wants to reassign a politically sensitive relationship or fast-track a repeat sponsor, that override should be easy and recorded. Hidden workarounds usually mean the routing rules do not match how the business actually runs.
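The split between agent-managed deadlines and manager-controlled priorities can be sketched with two small functions. The `Task` fields, the override record layout, and the names in the usage comments are illustrative assumptions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta


@dataclass
class Task:
    task_id: str
    owner: str
    opened_at: datetime
    sla: timedelta
    done: bool = False
    overrides: list[dict] = field(default_factory=list)


def breached(tasks: list[Task], now: datetime) -> list[Task]:
    """Return open tasks whose age exceeds their service-level target."""
    return [t for t in tasks if not t.done and now - t.opened_at > t.sla]


def reassign(task: Task, new_owner: str, by: str, reason: str, now: datetime) -> None:
    """Manual override: easy to perform, and always recorded on the task."""
    task.overrides.append({
        "at": now,
        "by": by,
        "previous_owner": task.owner,
        "new_owner": new_owner,
        "reason": reason,
    })
    task.owner = new_owner
```

The agent would call `breached` on a schedule and escalate the results. `reassign` is the path a manager uses, so the override shows up in the audit trail instead of becoming a hidden workaround.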

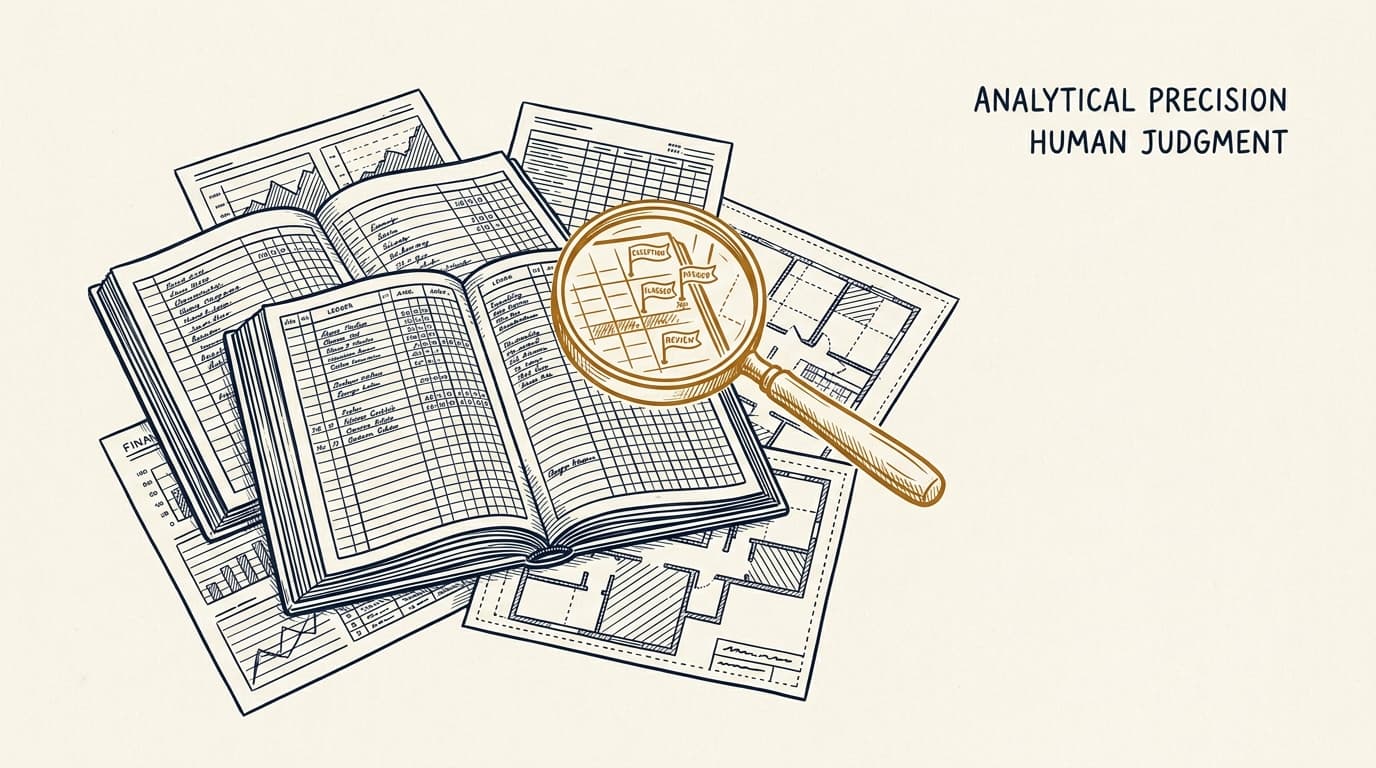

Where human credit teams should stay in the loop

Human review should stay mandatory whenever a file moves from operational processing into credit judgment, policy interpretation, or exception management. That line is what keeps origination automation useful instead of reckless.

At a minimum, human teams should review:

- Any file flagged for fraud, inconsistency, or unverifiable data.

- Any pre-screen result based on incomplete borrower or property information.

- Any request outside written policy or within an exception band.

- Any communication involving quoted terms, decline rationale, or structure changes.

- Any materially adverse decision or sensitive relationship issue.

The OECD AI Principles call for human oversight that matches the context and potential impact. In private CRE origination, that oversight should be built into the workflow itself. It cannot depend on whoever happens to notice a problem first.

A simple rule for escalation design

If the next action would change the lender's risk position, pricing posture, or legal exposure, a human should approve it. If the next action simply moves administration forward under documented rules, an agent can usually handle it.

That rule is easy to operationalize. Requesting a missing guarantor financial statement is administrative. Deciding weak liquidity is still acceptable is not. Routing a file to hospitality review is administrative. Approving an exception because the sponsor has strong recourse support is not.
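As a rough sketch, the rule reduces to a lookup: each action is tagged with the effects it has, and anything touching risk position, pricing posture, or legal exposure needs a person. The action names and the effect tags assigned to them below are hypothetical, and the sketch assumes unclassified actions default to human approval.

```python
# Effects that always require human approval under the rule above.
HUMAN_TRIGGERS = frozenset({"risk_position", "pricing_posture", "legal_exposure"})

# Hypothetical action catalog: action name -> effects that action has.
ACTION_EFFECTS = {
    "request_guarantor_financials": frozenset(),
    "route_to_hospitality_review": frozenset(),
    "accept_weak_liquidity": frozenset({"risk_position"}),
    "approve_policy_exception": frozenset({"risk_position", "legal_exposure"}),
    "send_quoted_terms": frozenset({"pricing_posture"}),
}


def needs_human_approval(action: str) -> bool:
    """True when the action must wait for a person to approve it."""
    effects = ACTION_EFFECTS.get(action)
    if effects is None:
        # Fail closed: an action nobody has classified is not administrative.
        return True
    return bool(effects & HUMAN_TRIGGERS)
```

Failing closed on unknown actions is the conservative choice, since new agent capabilities then stay gated until someone classifies them.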

A practical decision framework for origination automation

The best way to decide what to automate is to score each task on four variables: volume, rule clarity, error tolerance, and downside if the system gets it wrong. That is more useful than a standard vendor checklist because it forces lenders to weigh efficiency against actual risk.

| Task | Volume | Rule clarity | Error tolerance | Automation fit |
|---|---|---|---|---|
| Receipt acknowledgment | High | High | High | Very high |
| Missing document reminders | High | High | Moderate | High |
| Lead pre-screening | High | Moderate | Moderate | Moderate to high with review queue |
| Analyst assignment | Moderate | High | High | High |
| Quoted term communication | Moderate | Low | Low | Low |
| Policy exception handling | Low | Low | Low | Very low |

In practice, lenders that start with acknowledgments, deficiency tracking, and queue routing usually get faster adoption than lenders that start with automated screening decisions. The first group is easy to measure and rarely controversial. The second can create internal resistance fast, especially if originators think the system is quietly filtering relationships before a human ever sees them.

Implementation steps for private lenders

Implementation goes better when lenders map the current front-end process in operational detail before adding automation. Most failures start earlier than people want to admit. They come from automating a workflow that was never clearly defined in the first place.

- Map every intake channel, queue, and handoff in the current origination process.

- Define the minimum data fields required for inquiry capture and pre-screening.

- Document written rules for routing, deficiency notices, and escalation triggers.

- Separate operational automation from credit judgment authority in policy and system design.

- Configure audit logs for every inbound event, outbound message, task assignment, and override.

- Pilot the agent on one asset class or channel before expanding lender-wide.

- Measure response time, package completeness, manual touches, and override rates.

- Review error cases monthly and adjust rules, templates, and escalation thresholds.

This order matters because it gives the lender evidence instead of opinion. If the pilot cuts average first-response time from hours to minutes but also increases false escalations, the team can tune the rules before rolling it out further. That is not a failure. That is exactly what governance is supposed to catch.
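The pilot measurements can be computed from per-file records with a few lines of code. The record keys below (`first_response_min`, `complete_at_first_review`, `manual_touches`, `overridden`) are assumed names for illustration. A real pilot would pull these values from the workflow log.

```python
from statistics import mean


def pilot_metrics(files: list[dict]) -> dict:
    """Summarize pilot results from per-file records with the assumed keys."""
    if not files:
        raise ValueError("no pilot files to summarize")
    n = len(files)
    return {
        "avg_first_response_min": mean(f["first_response_min"] for f in files),
        "complete_at_first_review_rate": sum(f["complete_at_first_review"] for f in files) / n,
        "avg_manual_touches": mean(f["manual_touches"] for f in files),
        "override_rate": sum(f["overridden"] for f in files) / n,
    }
```

Running the same summary on a pre-pilot baseline gives the before-and-after comparison that turns the rollout decision into evidence rather than opinion.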

For adjacent intake design questions, see AI agents for borrower intake.

Common failure points in AI-assisted CRE origination

Most origination automation failures are process failures before they are model failures. The system does what the workflow tells it to do, but the workflow itself is incomplete, inconsistent, or politically unrealistic.

The most common problems are:

- Undocumented credit box: the agent applies informal rules that different originators interpret differently.

- Over-automation of edge cases: the system treats ambiguous files like simple files.

- Weak source controls: CRM, inbox, and document system data do not match.

- Poor communication governance: templates imply credit opinions instead of process facts.

- No override logging: staff bypass the workflow without leaving an audit trail.

The OWASP Top 10 for Large Language Model Applications points to the need for controls around prompt handling, data access, output validation, and misuse. For CRE origination teams, that translates into narrower permissions, template-based outbound communication, approved data sources only, and mandatory human review for any action with pricing, legal, or credit significance.

Frequently Asked Questions

What are AI agents for CRE loan origination?

AI agents for CRE loan origination are workflow tools that handle front-end lending tasks such as inquiry capture, preliminary lead triage, application completeness checks, broker updates, and internal task routing. They work best on repeatable operational steps and should not replace human credit decisions.

Which origination tasks should stay with human lenders?

Credit judgment, policy exceptions, decline decisions, pricing discussions, structure changes, and any case involving conflicting facts should stay with human teams. Those actions change risk, legal exposure, or borrower treatment in ways that require clear human accountability.

Can AI agents decline CRE loan requests automatically?

Some lenders may use automated screening to identify out-of-box submissions, but automatic declines create governance and relationship risk when source data is incomplete or policy logic is too rigid. In most private CRE settings, the safer design is to route clearly ineligible requests for quick human confirmation rather than allow a fully autonomous decline.

How do regional differences affect CRE origination automation?

Regional variation matters because lenders often segment by state, market tier, property type, and legal requirements. A routing rule for multifamily in Texas may differ from one for rent-regulated assets in New York or hospitality in Florida. The agent should apply lender-specific geographic rules, not assume one intake standard works everywhere.

What should lenders measure after deploying AI agents in origination?

Track first-response time, percentage of inquiries routed without manual intervention, application completeness at first review, SLA breaches, broker follow-up volume, override rates, and the share of files escalated for exception review. Those metrics tell you whether the agent is reducing manual delay without hiding risk or creating rework somewhere else.