AI Underwriting for Private Lenders: What to Automate

Private CRE lenders can automate document intake, data standardization, covenant testing, and exception routing with relatively low risk. Credit judgment, sponsor assessment, market interpretation, and structuring decisions still need analyst review because they rely on incomplete information, context, and policy trade-offs.

Private CRE underwriting is not one decision. It is a sequence: pulling data out of documents, checking spreads, testing covenants, routing exceptions, and then making an actual credit call. In AI underwriting for private lenders, the dividing line is clear. Automate the work you can test against source documents and policy rules. Keep analysts on anything that turns on market context, sponsor behavior, or real trade-offs in structure.

That line matters in 2026 because private lenders need faster turn times, but they still have to defend every conclusion in a file review. According to McKinsey research on the state of AI, organizations are using AI more heavily for workflow support, while regulated and high-stakes decisions stay under human oversight. In CRE lending, that means faster underwriting assembly is realistic. Fully autonomous credit decisions are not.

Key Takeaways

- The safest underwriting automation sits in repeatable tasks: document classification, field extraction, data normalization, calculation checks, and exception routing.

- Analysts should keep control of sponsor assessment, market rent judgment, downside sizing, structure selection, and the final credit recommendation.

- According to the National Institute of Standards and Technology AI Risk Management Framework, higher-risk AI use needs governance, monitoring, and clear human accountability.

- The model that works is not analyst versus software. It is machine-first prep, with analyst sign-off on assumptions, exceptions, and credit conclusions.

- Private lenders that want broader automation usually need better controls first, including standardized credit memos, policy rules, document taxonomies, and audit logs.

AI underwriting for private lenders works best when automation is limited to repeatable, testable tasks

The practical test is simple: does the task have a verifiable input, a defined rule, and an auditable output? If yes, it is usually a good automation candidate. If the answer depends on reading incomplete facts and choosing between competing credit views, it belongs with an analyst.

According to the OECD AI Principles, trustworthy AI systems should be robust, transparent, and accountable. In private lending, that points to a narrow but useful role. AI can assemble an underwriting file, catch inconsistencies, and record why a case was escalated. It should not quietly replace the lender's credit committee logic.

That is the real difference between workflow support and delegated authority. A model can compare lease abstract dates, recalculate debt service coverage ratio, and flag missing guarantor financials. It cannot reliably judge whether a sponsor with three successful bridge executions in one market can pull off a fourth repositioning in a softer submarket with lease rollover nine months out.

For the broader operating model across origination, underwriting, and servicing, see AI agents for private commercial real estate lending.

What private lenders can automate safely in underwriting

Private lenders can automate underwriting tasks that are repetitive, rule-based, and traceable to source documents. The best use cases are prep and validation, not approval.

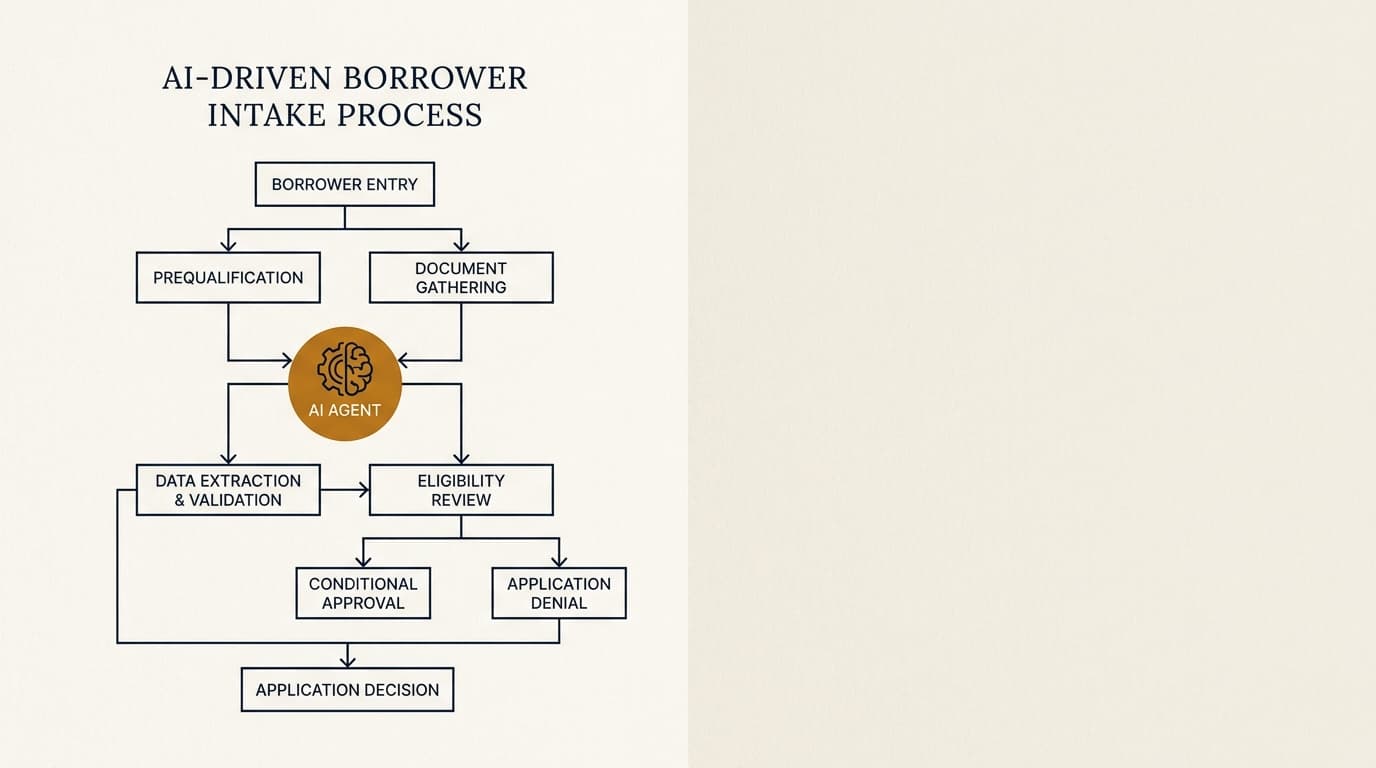

Document intake, classification, and data extraction

Document handling is often the first underwriting bottleneck because borrower packages show up incomplete, mislabeled, and all over the place. AI can classify rent rolls, trailing-12 financials, operating statements, appraisals, personal financial statements, and formation documents, then push the extracted fields into a standard template for review.

According to the Consumer Financial Protection Bureau guidance on service provider oversight, lenders remain responsible for processes they hand to vendors. That is why extraction needs confidence thresholds and review queues. In practice, private lenders can automate:

- File naming and document type recognition.

- Extraction of tenant names, lease dates, unit counts, base rent, reimbursements, and occupancy status.

- Borrower and guarantor entity matching across organizational documents.

- Detection of missing pages, duplicate files, and stale financial statements.

These workflows overlap with AI agents for CRE document analysis and AI agents for borrower intake, but inside underwriting the payoff is speed and consistency. Analysts should review extracted values where confidence is low or where a mismatch changes sizing.
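
The confidence-threshold idea above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `ExtractedField` structure, the `route_fields` helper, the 0.90 cutoff, and the sample values are all hypothetical, and a real extraction tool will expose confidence in its own way.

```python
from dataclasses import dataclass

# Hypothetical cutoff: fields scored below this go to an analyst.
REVIEW_THRESHOLD = 0.90


@dataclass
class ExtractedField:
    name: str          # e.g. "base_rent"
    value: str         # value as read from the document
    confidence: float  # extractor's score between 0 and 1
    source_doc: str    # file the value came from
    page: int          # page within that file


def route_fields(fields, threshold=REVIEW_THRESHOLD):
    """Split extracted fields into auto-accepted and needs-review lists."""
    accepted, review_queue = [], []
    for item in fields:
        if item.confidence >= threshold:
            accepted.append(item)
        else:
            review_queue.append(item)
    return accepted, review_queue


# Illustrative values only, not taken from any real rent roll.
fields = [
    ExtractedField("tenant_name", "Acme Logistics", 0.98, "rent_roll.pdf", 1),
    ExtractedField("base_rent", "14250.00", 0.72, "rent_roll.pdf", 1),
    ExtractedField("lease_end", "2027-03-31", 0.95, "rent_roll.pdf", 2),
]
accepted, review_queue = route_fields(fields)
print([item.name for item in review_queue])
```

A lender could also force sizing-sensitive fields such as base rent into the review queue regardless of score. That choice is a policy decision, not something the extractor should make.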

Spread calculations and policy checks

Calculation work is one of the safest areas to automate because formulas can be tested and version-controlled. AI should not invent assumptions, but it can recalculate metrics from lender-approved formulas and catch breaks between source files and the underwriting model.

Examples include:

- Recalculating debt service coverage ratio, loan-to-value ratio, debt yield, occupancy, and lease rollover concentrations.

- Checking whether taxes, insurance, reserves, and replacement capital were treated in net operating income the way lender policy requires.

- Comparing appraised value, purchase price, and as-is versus as-stabilized values for sizing exceptions.

- Flagging loans outside concentration limits by property type, geography, or sponsor exposure.

According to the Federal Reserve supervisory guidance on model risk management, effective controls include validation, change management, and clear limits on use. For private lenders, that means every automated metric should tie back to source inputs and lender-approved formulas, with a record of who overrode what.
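
As a rough sketch of what recalculating from lender-approved formulas might look like, the example below recomputes three metrics from source inputs and reports any that disagree with the underwriting model. The formulas are the standard definitions. The input figures, the `find_breaks` helper, and the tolerance are assumptions chosen for illustration.

```python
def dscr(noi, annual_debt_service):
    """Debt service coverage ratio: NOI divided by annual debt service."""
    return noi / annual_debt_service


def ltv(loan_amount, property_value):
    """Loan-to-value ratio: loan amount divided by property value."""
    return loan_amount / property_value


def debt_yield(noi, loan_amount):
    """Debt yield: NOI divided by loan amount."""
    return noi / loan_amount


def find_breaks(recalculated, model_values, tolerance=0.001):
    """List metrics where the underwriting model disagrees with the recalculation."""
    breaks = []
    for name, value in recalculated.items():
        model_value = model_values.get(name)
        if model_value is None or abs(value - model_value) > tolerance:
            breaks.append(
                {"metric": name, "recalculated": round(value, 4), "model": model_value}
            )
    return breaks


# Hypothetical source inputs for one loan.
noi = 650_000
annual_debt_service = 500_000
loan_amount = 7_000_000
property_value = 10_000_000

recalculated = {
    "dscr": dscr(noi, annual_debt_service),
    "ltv": ltv(loan_amount, property_value),
    "debt_yield": debt_yield(noi, loan_amount),
}
# Hypothetical values keyed into the underwriting model by an analyst.
model_values = {"dscr": 1.30, "ltv": 0.70, "debt_yield": 0.095}

breaks = find_breaks(recalculated, model_values)
print(breaks)
```

Keeping each formula in its own small function makes it straightforward to put under version control and to test against closed loans before it touches a live file.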

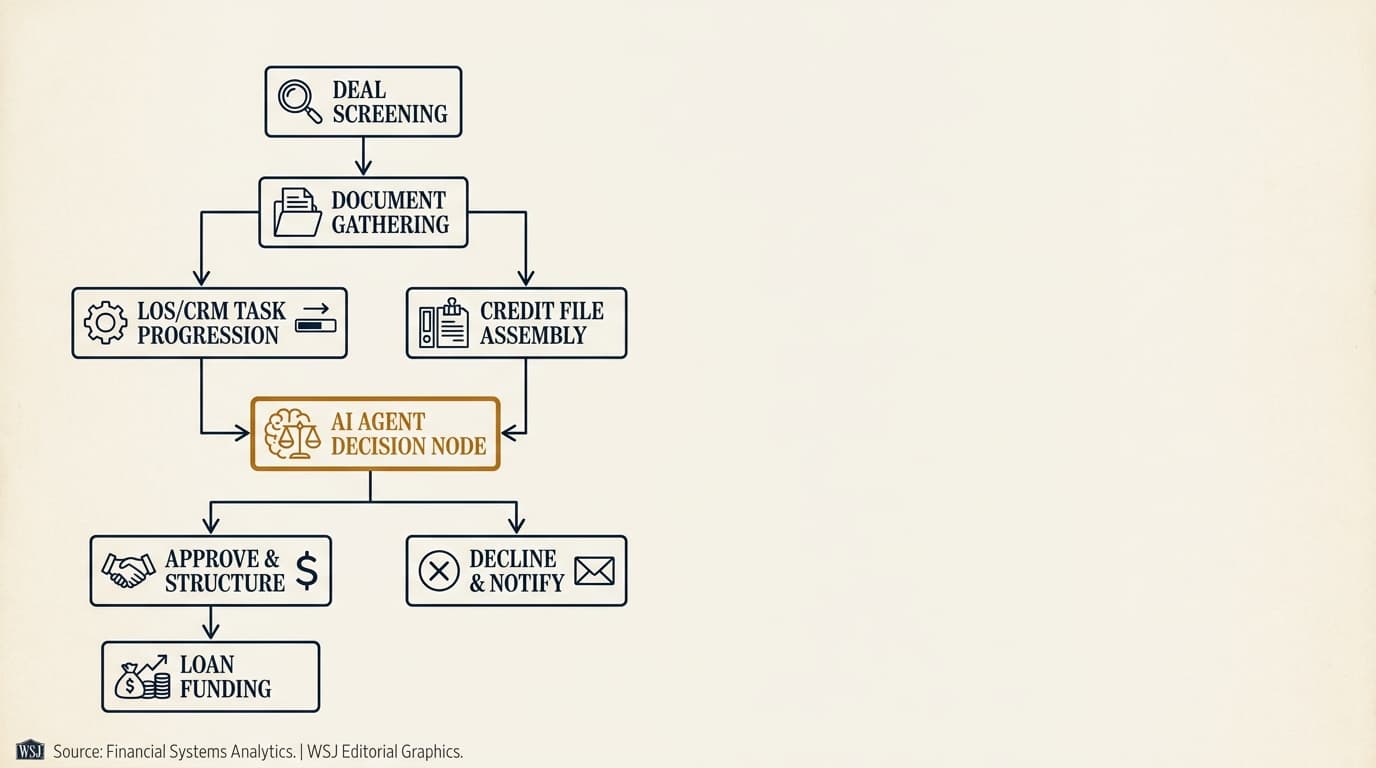

Exception routing and credit memo drafting

AI can route exceptions faster than most underwriting teams because it can compare a file against a policy matrix in seconds. It can also draft the non-analytical parts of a credit memo, as long as the output stays tied to source facts.

| Task | Safe to automate | Human review needed |
|---|---|---|
| Detect missing guarantor liquidity schedule | Yes | Confirm materiality if partial data exists |
| Flag DSCR below policy minimum | Yes | Assess whether compensating factors justify exception |
| Draft collateral summary from appraisal and rent roll | Yes | Verify unusual lease terms and market commentary |
| Assign final risk rating | No | Required |

The benefit is straightforward: better queue management. Junior analysts spend less time assembling files. Senior underwriters spend more time on assumptions and structure. Related workflow design issues are covered in AI agents for CRE loan origination and CRE loan origination workflow AI agent.
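
To make the policy-matrix comparison concrete, here is a minimal sketch. The rule IDs, limits, and queue names in `POLICY_MATRIX` are hypothetical placeholders for a lender's own policy, and the function only flags and routes. It does not approve or decline anything.

```python
import operator

# Hypothetical policy matrix: each rule names a metric, the comparison that
# must hold, the limit, and the queue that reviews a breach.
POLICY_MATRIX = [
    {"rule_id": "DSCR-MIN", "metric": "dscr", "op": operator.ge,
     "limit": 1.25, "route_to": "senior_underwriter"},
    {"rule_id": "LTV-MAX", "metric": "ltv", "op": operator.le,
     "limit": 0.75, "route_to": "senior_underwriter"},
    {"rule_id": "DY-MIN", "metric": "debt_yield", "op": operator.ge,
     "limit": 0.09, "route_to": "credit_committee"},
]


def find_exceptions(metrics, policy=POLICY_MATRIX):
    """Return one exception record per breached rule or missing input."""
    exceptions = []
    for rule in policy:
        value = metrics.get(rule["metric"])
        if value is None:
            reason = f"{rule['metric']} missing from file"
        elif not rule["op"](value, rule["limit"]):
            reason = f"{rule['metric']}={value} breaches limit {rule['limit']}"
        else:
            continue  # rule satisfied, nothing to route
        exceptions.append(
            {"rule_id": rule["rule_id"], "reason": reason, "route_to": rule["route_to"]}
        )
    return exceptions


# Illustrative file: DSCR is below the minimum and debt yield was never supplied.
exceptions = find_exceptions({"dscr": 1.18, "ltv": 0.70})
for item in exceptions:
    print(item)
```

Treating a missing input as an exception, instead of silently skipping the rule, keeps incomplete files from passing as clean ones.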

Which underwriting tasks still require analyst judgment

Analyst judgment still matters anywhere the job involves incomplete data, soft information, changing market conditions, or competing policy goals. In private credit underwriting, that is not a side case. That is the core of the work.

Sponsor quality and execution risk

Sponsor assessment is part documentary and part behavioral. Liquidity, net worth, recourse structure, and historical performance can be summarized automatically. The harder question is whether the sponsor can actually execute the business plan in the current market.

A debt fund evaluating a bridge loan on a 1980s suburban office conversion may have complete financial statements and still not be comfortable with execution risk. Analysts need to assess prior lease-up history, property management depth, refinance dependency, and whether the cost-to-complete numbers pass the smell test. No extraction engine can tell you if a sponsor's prior success in multifamily carries over to an office-to-medical repositioning.

Market rent, vacancy, and exit assumptions

Market assumptions require judgment because third-party data, broker opinions, and appraisal narratives often conflict. AI can summarize the material. It cannot safely choose the underwriting assumption without lender-specific context.

Take a lender sizing a small-balance industrial loan. Trailing occupancy may be 97%, submarket vacancy data may show 6.2%, and near-term rollover may sit with one tenant. The underwriting question is not which number is technically correct. It is which downside case the lender should use for sizing and extension risk. That belongs with an analyst or committee.
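
A small worked example shows why the choice matters. The vacancy figures come from the scenario above (97% trailing occupancy implies 3.0% vacancy, against 6.2% for the submarket). Everything else, including the income, expense, and minimum debt yield figures, is a hypothetical input picked only to make the arithmetic visible.

```python
def noi_at_vacancy(gross_potential_income, vacancy_rate, operating_expenses):
    """Net operating income after applying a vacancy assumption."""
    return gross_potential_income * (1 - vacancy_rate) - operating_expenses


def max_loan_by_debt_yield(noi, min_debt_yield):
    """Largest loan that still meets a minimum debt yield."""
    return noi / min_debt_yield


# Hypothetical property and policy inputs.
GROSS_POTENTIAL_INCOME = 1_000_000
OPERATING_EXPENSES = 300_000
MIN_DEBT_YIELD = 0.09

# Vacancy cases taken from the scenario in the text.
vacancy_cases = {"trailing": 0.03, "submarket": 0.062}

sizing = {
    label: max_loan_by_debt_yield(
        noi_at_vacancy(GROSS_POTENTIAL_INCOME, rate, OPERATING_EXPENSES),
        MIN_DEBT_YIELD,
    )
    for label, rate in vacancy_cases.items()
}
for label, loan in sizing.items():
    print(f"{label}: max loan {loan:,.0f}")
```

The code can run either case instantly. It cannot say which case the lender should size to, and that is the part that stays with the analyst or committee.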

Loan structure, pricing, and covenant trade-offs

Structure decisions are negotiated credit judgments, not clerical output. A machine can suggest reserve levels or prepayment language based on past loans, but deciding whether to require cash management, springing reserves, partial recourse, or tighter extension tests depends on sponsor leverage, market liquidity, and the lender's risk appetite.

This is where a lot of implementations go too far. The software looks persuasive because it produces a recommendation. That is exactly the risk. Recommendations often bury assumptions nobody explicitly approved. Private lenders should treat structure suggestions as prompts, not decisions.

A practical division of labor for debt funds and nonbank CRE lenders

The operating model that tends to hold up is machine-first preparation and human-first judgment. Debt funds and nonbank lenders usually get better results when they separate assembly work from credit authority.

| Underwriting activity | Primary owner | Why |
|---|---|---|
| Collect and classify borrower documents | AI workflow | High-volume, repeatable, easy to audit |
| Extract rent roll and operating statement fields | AI workflow with QC | Structured but error-prone without review thresholds |
| Recalculate DSCR, LTV, debt yield, and exceptions | AI workflow | Formula-based and testable |
| Draft base credit memo sections | AI workflow plus analyst edit | Useful for speed, but source review is needed |
| Set market assumptions and stress cases | Analyst | Requires context and policy judgment |
| Assess sponsor strength and business-plan credibility | Analyst | Depends on qualitative information |
| Recommend structure and pricing | Senior underwriter or committee | Involves negotiated trade-offs and risk appetite |

For many lenders, this cuts the least valuable analyst work first: rekeying, reconciliation, and checklist administration. It does not weaken underwriting discipline. It puts that discipline where it actually matters.

Post-close, the same logic carries into AI agents for loan servicing and AI agents for portfolio monitoring, where routine monitoring can be automated but waivers, defaults, and workouts still need judgment.

How to implement AI underwriting for private lenders without weakening controls

Implementation works when lenders standardize policy logic before they automate it. A weak underwriting process does not get safer because it gets faster.

- Map the underwriting workflow and separate preparation tasks from decision tasks.

- Standardize document names, required fields, and credit memo sections.

- Define lender-approved formulas, policy thresholds, and escalation triggers.

- Set confidence thresholds for extraction and require review below those thresholds.

- Log every automated output, override, and user approval in an audit trail.

- Test the workflow on a closed-loan sample before using it on live deals.

- Review false positives, missed exceptions, and override patterns monthly.
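
The last two steps, testing on a closed-loan sample and reviewing false positives and missed exceptions, could be sketched as a simple comparison between what the workflow flagged and what analysts actually recorded. The `backtest_flags` helper, the record layout, and the loan IDs below are hypothetical.

```python
def backtest_flags(sample):
    """Compare automated exception flags with analyst-recorded exceptions."""
    false_positives, missed = [], []
    for loan in sample:
        auto = set(loan["auto_flags"])
        analyst = set(loan["analyst_exceptions"])
        # Flagged by the workflow but not treated as an exception by analysts.
        false_positives += [(loan["loan_id"], rule) for rule in sorted(auto - analyst)]
        # Recorded by analysts but never flagged by the workflow.
        missed += [(loan["loan_id"], rule) for rule in sorted(analyst - auto)]
    return {"false_positives": false_positives, "missed_exceptions": missed}


# Hypothetical closed-loan sample.
sample = [
    {"loan_id": "L-001", "auto_flags": ["DSCR-MIN"], "analyst_exceptions": ["DSCR-MIN"]},
    {"loan_id": "L-002", "auto_flags": ["LTV-MAX"], "analyst_exceptions": []},
    {"loan_id": "L-003", "auto_flags": [], "analyst_exceptions": ["DY-MIN"]},
]
report = backtest_flags(sample)
print(report)
```

Missed exceptions usually deserve attention first, since a false positive costs reviewer time while a miss can let an out-of-policy loan through unexamined.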

According to the NIST AI Risk Management Framework Playbook, governance depends on documentation, testing, and ongoing monitoring. For private lenders, three controls matter most:

- Source traceability: every extracted figure should link back to the original document and page.

- Policy traceability: every exception flag should cite the specific policy threshold or formula used.

- Decision traceability: final recommendations should show where the analyst accepted, changed, or overrode the machine output.
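
One way to picture the three controls together is a single record per underwriting output. The sketch below is an assumed structure, not a standard schema: the `TracedOutput` class, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, asdict
from typing import Optional


@dataclass
class TracedOutput:
    metric: str
    machine_value: float
    source_document: str               # source traceability: which file
    source_page: int                   # source traceability: which page
    policy_rule: str                   # policy traceability: rule or formula cited
    analyst_action: str = "pending"    # decision traceability
    final_value: Optional[float] = None
    approver: Optional[str] = None

    def resolve(self, approver, final_value=None):
        """Record whether the analyst accepted or changed the machine value."""
        if final_value is None or final_value == self.machine_value:
            self.analyst_action = "accepted"
            self.final_value = self.machine_value
        else:
            self.analyst_action = "changed"
            self.final_value = final_value
        self.approver = approver
        return self


# Illustrative record: the analyst corrects the machine-calculated DSCR.
record = TracedOutput(
    metric="dscr",
    machine_value=1.18,
    source_document="t12_operating_statement.pdf",
    source_page=3,
    policy_rule="DSCR-MIN 1.25x",
)
record.resolve(approver="analyst_a", final_value=1.21)
print(asdict(record))
```

Because the machine value is never overwritten, a reviewer can see both what the system produced and what the analyst signed off on.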

Compliance and recordkeeping issues are discussed in more detail in AI agents for CRE lending compliance, while a broader discussion of underwriting-specific controls appears in AI agents for CRE underwriting.

Common failure points in AI underwriting for private lenders

Most failures here are process failures, not model failures. Private lenders usually get into trouble when they automate messy inputs, leave assumptions unstated, or fail to govern exceptions.

The most common failure points are:

- Unstructured policy rules: if exceptions live only in senior underwriters' heads, the system cannot route them reliably.

- Poor document hygiene: stale rent rolls, scanned images, and mixed reporting periods produce bad spreads no matter how good the model is.

- Invisible overrides: if analysts can change figures without a reason code, the audit trail is broken.

- Over-automation of narrative conclusions: drafted memos can sound finished while hiding weak assumptions.

- No post-close feedback loop: lenders that do not compare underwritten assumptions against actual performance never improve the rules or prompts.

The useful benchmark is not whether the system sounds smart. It is whether the lender can explain, document, and reproduce every output in a file review.
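
The invisible-override problem in particular is easy to guard against in code. The following is a minimal sketch, assuming an in-memory log and a made-up list of reason codes. A production system would persist entries and use the lender's own code list.

```python
from datetime import datetime, timezone

# Hypothetical reason codes; a lender would define its own.
REASON_CODES = {"SOURCE_CORRECTION", "UPDATED_DOCUMENT", "POLICY_EXCEPTION_APPROVED"}


class AuditLog:
    """Append-only log that refuses overrides without a valid reason code."""

    def __init__(self):
        self._entries = []

    def record_override(self, field_name, old_value, new_value, user, reason_code):
        if reason_code not in REASON_CODES:
            raise ValueError(f"override of {field_name!r} needs a valid reason code")
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "field": field_name,
            "old_value": old_value,
            "new_value": new_value,
            "user": user,
            "reason_code": reason_code,
        }
        self._entries.append(entry)
        return entry

    def entries(self):
        """Return a read-only snapshot of the log."""
        return tuple(self._entries)


log = AuditLog()
log.record_override("base_rent", 14250.00, 14520.00, "analyst_a", "SOURCE_CORRECTION")
try:
    # No reason code supplied, so the change is rejected and never logged.
    log.record_override("vacancy_rate", 0.03, 0.05, "analyst_b", "")
except ValueError as err:
    print(err)
print(len(log.entries()))
```

Rejecting the change outright, instead of logging it with a blank reason, is the design choice that keeps the audit trail reproducible in a file review.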

Frequently Asked Questions

What is AI underwriting for private lenders?

AI underwriting for private lenders uses software to automate repeatable underwriting tasks such as document classification, data extraction, financial spreading, policy checks, and exception routing. In practice, private CRE lenders still keep analysts responsible for assumptions, sponsor assessment, structure, and final credit decisions.

Which underwriting tasks can private CRE lenders automate with the lowest risk?

The lowest-risk tasks are document intake, rent roll and operating statement extraction, formula-based metric calculations, checklist completion, and policy exception flags. These tasks are relatively safe because they can be tested against source documents and lender-defined rules. Final risk ratings, market assumptions, and structure decisions should stay with human underwriters.

Does AI underwriting reduce headcount for debt funds?

Usually, it reduces manual assembly work before it reduces underwriting roles. Debt funds typically use AI to shorten turn times, support junior analysts, and improve consistency across files. In smaller shops, that may delay additional hiring. In larger platforms, it often shifts analyst time away from data entry and toward credit analysis and committee prep.

Are there regional differences in how private lenders should use AI underwriting?

Yes. Regional differences matter most when market data quality varies. In gateway markets with deeper transaction and lease data, automated summaries may be more reliable for screening. In tertiary markets, sparse comps and uneven appraisal support make analyst judgment more important on rents, vacancy, exit cap rates, and sponsor execution assumptions.

How should private lenders document AI underwriting decisions for audits and investor reporting?

Private lenders should preserve the source document, extracted fields, calculation logic, policy rule triggered, analyst override history, and final approver for each material underwriting output. That record is more useful than a generic system log because it lets an internal auditor, warehouse lender, or investor reconstruct how the underwriting conclusion was reached.